I discovered the creative exhilaration of programming at a formative age. Yet all along I have also felt stifled by primitive, ill-suited languages and tools. We are still in the Stone Age of programming. My quest is to better understand the creative act of programming, and to help liberate it. Reflection upon my own practice of programming has led me to three general observations:

- Programming is mentally overwhelming, even for the smartest of us. The more I program, the harder I realize it is.

- Much of this mental effort is laborious maintenance of the many mental models we utilize while programming. We are constantly translating between different representations in our head, mentally compiling them into code, and reverse-engineering them back out of the code. This is wasted overhead.

- We have no agreement on what the problems of programming are, much less how to fix them. Our field has little accumulated wisdom, because we do not invest enough in the critical self-examination needed to obtain it: we have a reflective blind-spot.

From these observations I infer three corresponding propositions:

- Usability should be the central concern in the design of programming languages and tools. We need to apply everything we know about human perception and cognition. It is a matter of “Cognitive Ergonomics”.

- Notation matters. We need to stop wasting our effort juggling unsuitable notations, and instead invent representations that align with the mental models we naturally use.

- The benefits of improved programming techniques can not be easily promulgated, since there is no agreement on what the problems are in the first place. The reward systems in business and academics further discourage non-incremental change.

What is the future of programming? I retain a romantic belief in the potential of scientific revolution. To recite one example, the invention of Calculus provided a revolutionary language for the development of Physics. I believe that there is a “Calculus of programming” waiting to be discovered, which will analogously revolutionize the way we program. Notation does matter.

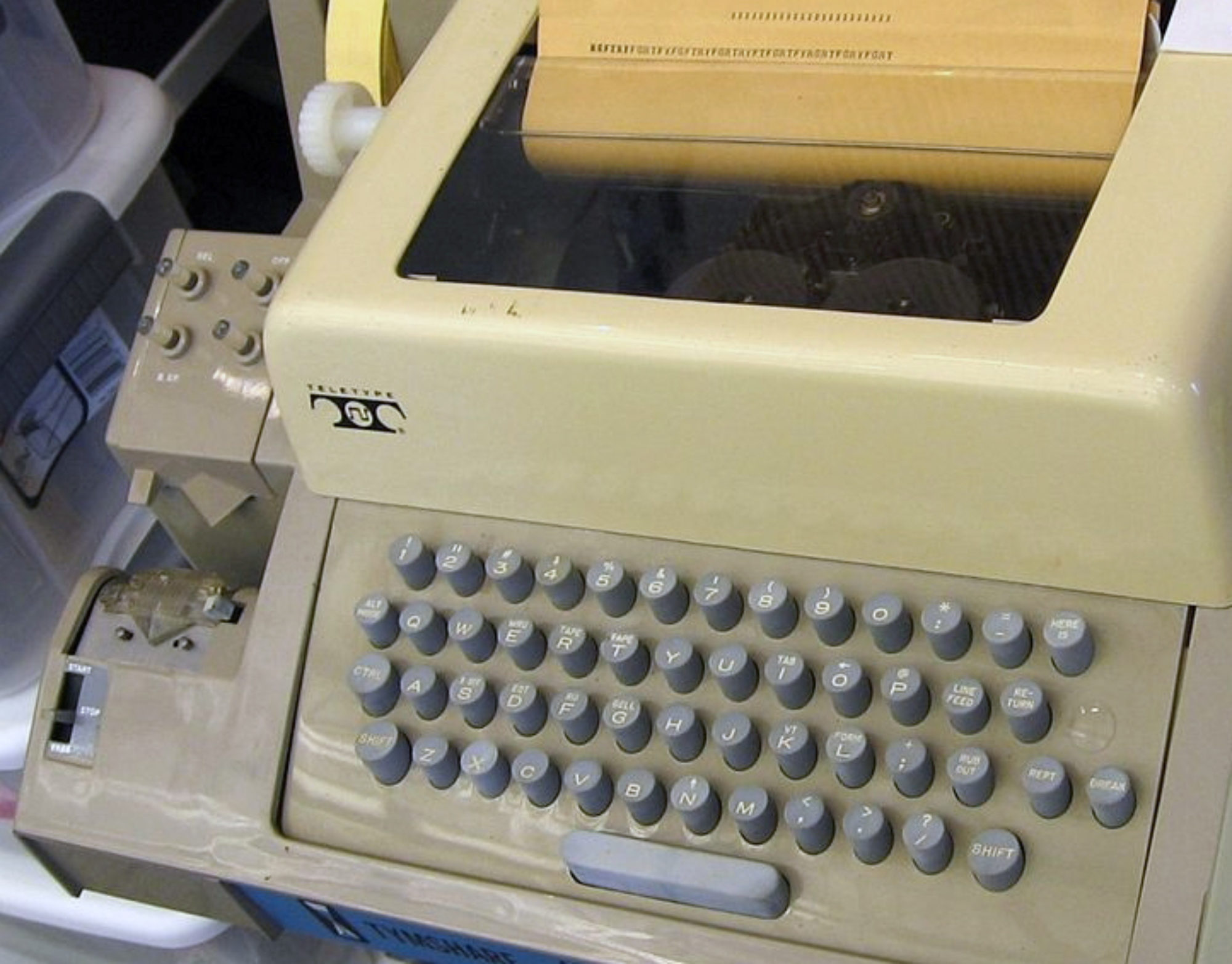

Our current choice of notation leaves much to be desired: ASCII strings encoding structure through grammars and names. We have automated many complex information artifacts, from documents to diagrams, and we have built many complex data models and user interfaces for them. From this perspective, it is absurd that programs are still just digitized card decks, and programming is done with simulations of keypunch machines. The move from keypunches to on-screen editing was a huge leap forward, but that was 30 years ago. We have not yet taken the next logical step, which is to represent programs with the complex information structures made possible by computers.

The guiding goal I propose above is programmer usability. One way to discover how to make programming more usable is to look for clues in what currently feels the most difficult and unnatural when we program. Here are some of the clues I am investigating:

- We often think of examples to help understand programs. Programs are abstractions, and abstract thinking is hard. Across all fields, examples help us learn and work with abstractions. Example centric programming is my proposal to integrate tool support for examples throughout programming. Going further, I am trying to reduce the level of abstraction required in the first place with prototype-based semantics and direct-manipulation programming.

- We tend to build code by combining and altering existing pieces of code. Copy & paste is ubiquitous, despite universal condemnation. In terms of clues, this is a smoking gun. I propose to decriminalize copy & paste, and to even elevate it into the central mechanism of programming.

- The dominant programming languages all adopt the machine model of a program counter and a global memory. Control flow provides conditional execution, and allows memory accesses (side-effects) to be serialized. The problem is that control flow places a total order on the actions of the program, while both conditionals and side-effects are inherently partially ordered. One condition arises when a specific combination of other conditions arise. Likewise we care that a data read occurs only after a specific set of data writes. Control flow languages force us to do a topological sort in our head to compile these relationships into a linear execution schedule. What’s worse, we later have to reverse engineer the tacit relationships back out of the code. Control flow is a suitable notation only for the back-end of a compiler, and should be considered harmful to humans. Escaping the linear confines of textual notation is a first step towards directly expressing non-linear relationships. To better represent side-effect relationships, I am exploring database-like transactional semantics.

- Our languages are lobotomized into static and dynamic parts: compile-time and run-time. This dichotomy exists solely for ease of compilation and optimization of performance. Modern hardware and compilers have made these concerns unimportant in most situations, yet they live on enshrined in the design of our languages. There should be no difference between run-time and edit-time. More generally, we must discipline ourselves to ignore the siren call of performance, keeping the tiller pointed towards usability.

- We can roughly categorize many mental models into two types: structural and behavioral. Some cognitive scientists trace this division to specializations for vision and communication, respectively. Our programming languages are highly biased about these two representation styles. Generally, we use structural models for static constructs, and we use behavioral models for dynamic constructs. This pattern is seen most clearly in pure OO languages like Smalltalk. We can not afford to program with one hand tied behind our back: we need to integrate the use of structural and behavioral models throughout programming. To better frame the issue, I am exploring the “dual” of OO, in which the encapsulation of behavior is replaced by the publication of reactive structure.
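
To make the control-flow clue concrete, here is a minimal sketch in Python of the "topological sort in our head" that the third bullet describes. The action names and their dependencies are hypothetical, chosen only for illustration; the point is that the programmer knows a partial order (which writes a read depends on) and must flatten it into one linear schedule.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical actions. Each key maps to the set of actions that must
# complete before it: this is the partial order the programmer has in mind.
depends_on = {
    "write_price": set(),
    "write_quantity": set(),
    "read_total": {"write_price", "write_quantity"},
    "print_invoice": {"read_total"},
}


def linear_schedule(deps):
    """Return one total order that is consistent with the partial order."""
    return list(TopologicalSorter(deps).static_order())


schedule = linear_schedule(depends_on)
print(schedule)
```

The two writes are independent, so either may come first; a control-flow language forces us to pick one order anyway, and a later reader cannot tell from the code that the choice was arbitrary.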

These clues outline a long-term research program, in which I hope to collaborate with others of like mind. The first steps can be seen at subtext.

Leaping ahead, let us suppose for the moment that we have invented a revolutionary new way to program. How can we get programmers to use it, academics to research it, and entrepreneurs to invest in it? As discussed earlier, there are deeply entrenched obstacles to change, both psychological and institutional. The endless cycle of hype and disappointment has hardened cynicism. I am afraid that we have reached the point where talking is useless.

I can think of only one strategy to break this deadlock: lead by example. We need to get our hands dirty and build real code for real users, and do it better-faster-cheaper. Nothing succeeds like success. Thinking even further out, perhaps we could establish something like a teaching hospital: a non-profit organization that combines programming for real clients with a mission of education and research. In the meantime, the first job is the inventing.

—–

[Welcome Diggers. Before you comment on this old post, I suggest you take a look at what I have done in the meantime. The best summary is in my last OOPSLA paper.]

The main reason that software is so unreliable and so hard to develop has to do with a custom that is as old as the computer: the practice of using the algorithm as the basis of software construction. There is a solution to these problems, but it’s not a new language, it’s a new paradigm. We must adopt a synchronous, signal-based software model.

http://www.rebelscience.org/Cosas/Reliability.htm

Do you know what I imagine would be really retarded? You write something insightful and valuable, and post it to your blog in, say, December of 2004… and nobody reads it, nay, nobody gives a shit.

Fast forward to, for example, September of 2006, almost two years after the thing is originally posted, almost one year after you’d forgotten all about it, and somebody decides to link to your post on a community news site, for example, digg.com

And suddenly, your little post gets 40 comments in a single day, not a single one realizing that you’d long since moved on with your life, completely ignoring the fact that there had been only one comment in over a year.

Yeah, I think that’d be pretty retarded.

Hi.

Some er, “interesting” points!

I tend not to agree – here is why: http://mattbloguk.blogspot.com/2006/09/future-of-programming.html

Given the rationale here, I expected the punch line/solution to be: ‘Visual IDEs’–after all, didn’t Visual Basic address many of these same concerns?

People often seem to lose sight of the weakest link in pretty much any system in existence…People. On a cognitive level people use heuristics to cope with day to day problems. Heuristics implies not considering all options, mental accounting if you will. It is this that flaws us, as we can seldom achieve anything in a reproducible manner. Try drawing requirements from a user. The user is aware that a problem exists, but they don’t know what the problem is, let alone how to come up with a solution to it. Forcing one to think in fixed constructs advocates eliminating the heuristics that allow us to express problems in an ambiguous fashion. My thought on producing better systems faster is human education. Teaching people to think in a stepwise, systematic fashion will ultimately lead to them understanding what they are trying to achieve. Once this has been defined, programming merely becomes the tool to bring the vision to fruition. I don’t discredit attempts to streamline programming, in fact most of my work is of this nature, but experience has taught me the micro-refinement of processes is going to have little impact when the other side of the spectrum is so fundamentally flawed.

Bear this in mind, if we ever reach processing speeds equal to the speed of thought, and we model our development efforts around human brain patterns, then at best our system will only perform as effectively as a human manually crunching away at the problem.

These are interesting ideas, but:

* Are you sure you know the best of 2006 ? Unless you are fluent in at least Ruby, Lisp, Smalltalk, Java IDEs, functional programming, test-driven development, and aspect-oriented programming then I’d take a guess that maybe not 🙂 Maybe the problem is already solved, it simply takes time before the solution is known 🙂

* What is the next action ? There is little hope for long-term plans, unless the very first step is already paying back a lot.

You may be interested in my (much more short term) ideas – http://t-a-w.blogspot.com/2006/07/programming-language-of-2010.html

Wrong, completely wrong. Fred Brooks would be turning in his grave, if he knew that 20 years after “No Silver Bullet” still no understanding has emerged among programmers. Here are the most obvious points:

– “The mental models we normally use” are woefully inadequate for programming. It’s not a matter of notation, it’s inherent in the task. We’ll have to live with it.

– To communicate complicated ideas, humans developed language. Little children point at things and grunt, after about 1-2 years they begin to see the advantage of language and use it. ASCII is quite capable of representing this sharp tool, colorful diagrams are not.

– We never understand programs in terms of examples. If you need examples, you haven’t understood the problem. “Example oriented programming” will give you brittle programs full of surprises, or exactly what we have now.

– C&P is a symptom of an inadequate language. Use a good language, build a better abstraction. Advocating rape-and-paste-coding is criminally insane.

– Compiling a program is a good thing, provided I have a good type system. I like to be warned when I try to put round pieces into square holes.

…but you’re right that the C model of programming should be replaced. The problem is, the stone age already ended in the 60s (Lisp) or maybe the 80s (ML, Haskell), but the establishment is still advocating the use of stone knives, because “hardly anyone can understand that new fangled metal stuff.”

Now please go read anything by Fred Brooks or Edsger Dijkstra you can get your hands on, look at when it was written, and weep.

marvin: We have some sort of “example-oriented programming” already. It’s usually called “test driven development” and it works far better than you can imagine unless you seriously tried it.

Basically you write a few examples (test cases), and then just some trivial code that fits with these examples. If the examples are good, the coding is so simple that we can safely disregard it.
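
To illustrate the examples-first workflow described above, here is a small sketch in Python. The function `slugify` and its examples are hypothetical, not from any project mentioned here: the examples are written down first, and the code is then just the simplest thing that fits them.

```python
# Step 1: write the examples (test cases) before any implementation.
# Each pair is (input, expected output) for a hypothetical slugify().
EXAMPLES = [
    ("Future of Programming", "future-of-programming"),
    ("  Stone   Age ", "stone-age"),
    ("", ""),
]


# Step 2: write the most trivial code that fits the examples.
def slugify(title):
    """Lowercase the title and join its words with hyphens."""
    return "-".join(title.lower().split())


# Step 3: check the code against every example.
def failing_examples(fn, examples):
    """Return (input, expected, actual) for each example fn gets wrong."""
    return [(arg, want, fn(arg)) for arg, want in examples if fn(arg) != want]


failures = failing_examples(slugify, EXAMPLES)
print("all examples pass" if not failures else failures)
```

How well this works depends on the claim made above: the examples have to be good enough that the code fitting them is nearly forced.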

i agree that programming should be much simpler, and the whole process of engineering software should be quick enough that the software could be built while talking about its requirements with the customer…

if there is a programming language that is written in the language of thought, then while talking with the customer (and if the UI of the software is sufficiently user-friendly and intuitive) you can at the same time prepare the software by displaying the requirements of the user in the language of thought, and then those ideas represented in that software would also be programmatically runnable…

that’s the idea of the Nelements programming language that is in development… it’s to help software engineers develop software in a much more efficient manner….

come on guys stop screwing around. This is real stuff here. Who cares if you cant understand it

Make the comments reasonable guys…

i have great interest in this article

i also think programming languages need help.

There are too many options when writing a program; it could take a day just to decide whether to write a Nassi-Shneiderman diagram or use pseudocode. I think the problem is that no one has stood up and said this is what’s going to happen. We need one code with one set of rules, with no exceptions like an arrow being the same as =. This just confuses people, and I am sure once the confusion has been lifted there will be no need to copy other pieces of code. I am not here asking for some sort of super code; I’m asking for a simple, straightforward programming code that a matt boltovitch would understand.

I say programming isn’t in the stone age; it’s at a fork in the road. Either people take charge and put together one programming code for all to use, or we can forget about easy programming, for I am sure nerds in their garages and basements will continue making useless programs that only the biggest of egos can understand.

And I leave you with this: I am not old or in any position to make a big deal about programming because I have just started, but I love my history and I can see that if something is not done, programming is just going to be so confusing that no simple-minded child like me could ever understand it.

PS: your use of language belongs in the sexual romantic book collection on my mum’s shelf, not on this web site. Like I said, the first step to saving programming is to keep it simple.

ok this is serious guys.

I really like this article because, for one, the author is a good writer, and also because his ideas are pretty well thought out. But I disagree with the idea that we should try and make everything work exactly like our brain. Our brain exists to map concepts from one “world” to another. That’s the job of our brain, to take some abstract concept that doesn’t work exactly like our brain, and combine it with memory to end up with a useful “task”. In the world we live in, compromises are everywhere. In computer science, this is no different. When operating within the confines of reality, encoding our logic into strings is the best way to accomplish all of our goals. I guess my question for the author is then, if not ASCII strings, then what? Here is the other reason why I think this is somewhat flawed. The day we invent hardware which can bring this “ditch the text-based stuff” idea to reality, the implications of such hardware will likely have such huge effects on the industry that our entire goal and reasons why we have computer science will change, therefore changing the needs of computer programming languages. So it is impossible to imagine a world where programming isn’t done with a keyboard, because everything we know and do with computers uses text-based interfaces.

“We are constantly translating between different representations in our head, mentally compiling them into code, and reverse-engineering them back out of the code. This is wasted overhead.”

This action takes place in EVERY single learned task humans do. Once again, that’s in the job description of the human brain. I suppose we should get rid of English and try to come up with a new language that uses our native brain signals? We are constantly translating English words/sentences into the concepts and ideas they represent, but that doesn’t mean we should get rid of English!

“The dominant programming languages all adopt the machine model of a program counter and a global memory. Control flow provides conditional execution, and allows memory accesses (side-effects) to be serialized.”

The reason they adapt to the machine is because the people tasked with actually implementing such technologies (as opposed to just writing about their faults) have the daunting task of making it work at a level of performance which is acceptable. COMPROMISE is the key word here: we needed speed, and we needed the ability to code at a decent speed to actually be able to create software in a reasonable amount of time. This problem still exists today, no matter how much proponents of garbage collected slow-as-snails languages want to tell you otherwise. The distance by which you abstract yourself from the machine is directly proportional to the performance hit you incur (a loose approximation, but no one can disagree with the premise). Sure, it would be great if I could just think of a program in my mind and have it all of a sudden appear (which is what it sounds like is the goal), but what would separate a computer scientist from a bum if it was so easy? I am against revolution just for the sake of revolution, and the lack of concrete suggestions for improvement places this article right on that line.

My opinion on this is that we aren’t really in the stone age of programming. How do we know that technology will continue to improve until it reaches a peak of ultimate technology where at the press of a button we will all be dead? How do we even know whether or not the world will end before we reach that stage of technological revolution, of ‘Cognitive Ergonomics’? I really think that wherever we are in the present is the peak of programming. We cannot really judge whether or not we have reached the ultimate peak of programming. P.S. Matt Baltovich is a noob

I believe that it’s true that we are waiting for the ‘Calculus of programming’. I think you are onto something with including example centric programming, but isn’t this fourth generation language programming?

i entirely agree, i completely fail at programming, your article is well written, thought out and a credit to your name

Very good article; it illustrates the point I was trying to make with my class. We had been discussing the generational development of languages and the foibles of pseudocode syntax, and the fact that the constructs of languages force you to solve problems by “un-natural” means. Sorry about matt and fernandez; they no longer have internet access for 1 week

He is right. Programming is hard, not because it is abstract but because it’s abstract in a way that our brains did not evolve to handle with ease.

ASCII as used in programming is difficult – it’s an abstraction within an abstraction. If we must adapt to find the middle ground between our semantics and the silicon’s (the first) then why not use a symbolic representation that’s easier to work with? I won’t suggest one, just suggest openness to the idea

Finally, I agree that this article doesn’t pay many dues to other work being done in this area, but if all the rest is inaccessible jargon to all, why can’t people of his level suggest a more intuitive, easier to learn programming interface?

Zac McCormick wrote:

“Sure, it would be great if I could just think of a program in my mind and have it all of a sudden appear (which is what it sounds like is the goal), but what would separate a computer scientist from a bum if it was so easy?”

Well, instead of writing stupid programs which any bum can do, they’d be spending their time doing research instead. Don’t you think that’d be great? Imagine all the resources that could be spent on computer research instead of being wasted on making computers do a poor imitation of how we want them to work! We’d be building nano-petaHz-computers in no time! On the other hand, too many dystopias have been written about computers that can program themselves to take this lightly. But I believe this is the future, whether we like it or not.

Interesting… but… I don’t think we will see it in our lifetime. but… I hope I’m wrong. I really do. I would love to see some badass new magical way to program. Just not sure how we can abstract these ever changing problems anymore. I’ve been playing with RDF and OWL, interesting stuff, not the magical stuff you talk about, but a different approach to common problems.

-myunitedstatesofwhatever

Read it on Digg. It seems that we are at one end of programming. I agree with the above article, but the question is how practical it is when most programmers have their hands tied up in traditional programming.

nice troll. got you to digg.

“…decriminalize copy & paste, and to even elevate it into the central mechanism of programming…”

looks like a clueless troll to me.

but maybe you are not hopeless – you just need to learn. Start by reading Dijkstra: “The Humble Programmer”. Then learn a flexible language like Python.

The future of programming is not so clear, but I feel that new programming paradigms will come soon.

you can see

1 – Language Oriented Programming (started in 2004)

2- Agent Oriented Programming (started in 1990)

3- Super Server Paradigm (Started in 2006)

http://www.sourceforge.net/projects/doublesvsoop

Eiffel – has one idea which I found to be very powerful.

It is ‘the contract’. The function agrees to honour valid input.

Invalid input is an error.

I used this concept in an OO project written in C as there was no OO language available for the CPU.

By using assertions to check inputs – the system caught the errors.

As a production system I forced the invalid value to a valid value.

AND logged a software error report.

This meant the real time program operated predictably and bugs were detected.
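
A minimal sketch of that approach, written in Python rather than the C of the original project. The names `require_in_range` and `set_motor_speed` and the 0 to 6000 range are hypothetical, invented only to show the shape: check the input against the contract, fail loudly in development, and in production force a valid value and log an error report.

```python
import logging

logger = logging.getLogger("contract_sketch")  # hypothetical logger name


def require_in_range(value, low, high, *, production):
    """Precondition: valid input is honoured, invalid input is an error."""
    if low <= value <= high:
        return value
    if not production:
        # In development, fail at once so the caller's bug is found early.
        raise AssertionError(
            f"precondition violated: {value!r} not in [{low}, {high}]"
        )
    # In production, force the value into range and log an error report.
    forced = min(max(value, low), high)
    logger.error("precondition violated: %r forced to %r", value, forced)
    return forced


def set_motor_speed(rpm, *, production=True):
    """Hypothetical routine whose contract is 0 <= rpm <= 6000."""
    return require_in_range(rpm, 0, 6000, production=production)


print(set_motor_speed(1500))  # valid input passes through unchanged
print(set_motor_speed(9000))  # invalid input is clamped and logged
```

Clamping to the nearest bound is only one way to "force a valid value"; the right fallback depends on the system being controlled.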

Hi. I’m a newbie so I’m not clear what the fuss is about. Y’all have jumped several layers of abstraction away from the computer– yippie! We’re blessed (or cursed, as remains to be fought!) with Moore’s Law, and whatever you call the equivalent trends in disk space and memory, which buys us the right to make deeper abstractions every single year! Yay!

Obviously the way to get further away from the computer is to have the computer do more. Computers, as we know, can do anything. It’s that simple & that difficult– to determine the entire future of our civilization, by deciding what it is we want to make easy.

My guess– or perhaps it’s just a hope– is that rather than a successful challenge being raised to the animate ASCII model within the walls of Programming, what’s going to happen is that a way of programming that’s a paradigm shift easier is going to be taken up by the general population.

Users DO program the computer, you know, folks. It’s just that the only apparent handles given to them for grabbing the computer are the code that YOU write. Y’all hand them so many little domain specific languages– languages where the only commands they can give are the checking or unchecking of a field of “options.” If you observe even the naivest of users, they approach the limited options they’re given in a beautiful hackerly spirit– discerning some level of the internal physics of the system through a series of tests, then setting the device in a state that behaves like they need it to– & by turning a bug into a feature if necessary!

Create powerful abstractions and hand them to the user. For instance: There’s all this fuss trying to make handles that will allow programs to talk to GUIs– ways of programming pretty interfaces. I think we should think seriously about putting those handles the other way. Start making user interfaces that are so adaptable as to be general– or to put it another way, make a domain specific GUI language that’s at a level of abstraction where it can be easily pushed & prodded by the naivest users– resizing panels, choosing color schemes, we’ve already worked out how to make this stuff easy. Everyone who uses a computer is a programmer, and they’ll all start taking over a lot of the real substance of the task as soon as they’re given any opportunity.

Don’t try to fix the language when the problem truly lies in the programmer.

The site looks great ! Thanks for all your help ( past, present and future !)

http://www.sourceforge.net/projects/doublesvsoop

DoubleS Framework for Windows & Microsoft Visual FoxPro.

Programming without Code, yes maybe, year 2008.

Well, have you heard about Lisaac?

http://isaacos.loria.fr/news.html

It is still a string-based programming language, but maybe it has some insights for your quest.

I think in the future programmers will increasingly use dynamic languages. You already see this now: everyone seems to be migrating to Ruby, which is more or less Lisp minus macros. And Perl 6, from what I’ve heard, seems to be even more Lisplike. It’s even going to have continuations.