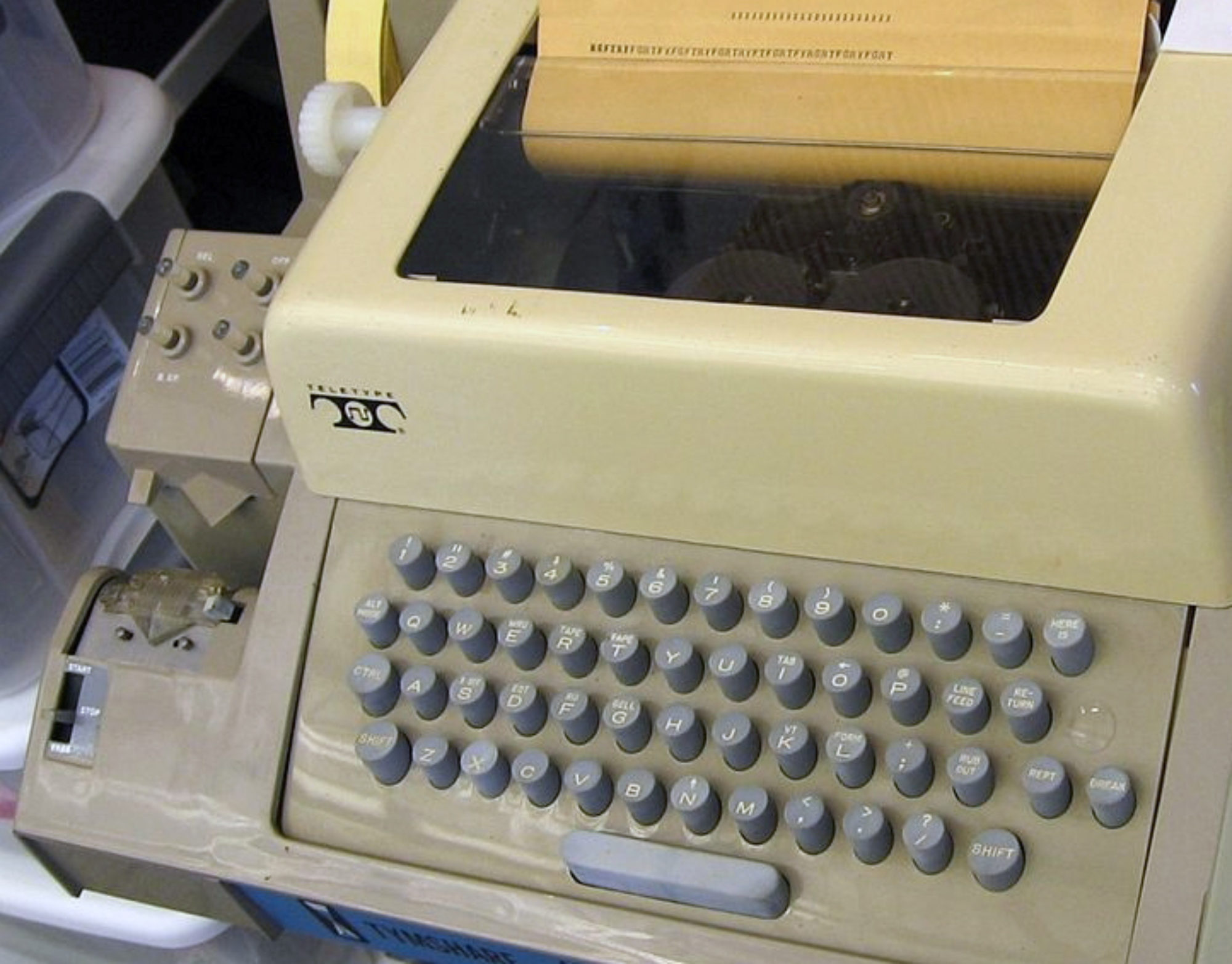

I saw some interesting work on touch programming languages at Onward. You may already have seen Codify. That is still a mostly textual language, as the clickety-click of the keyboard in the video attests. The new research explores how to program entirely in touch, which requires avoiding typing as much as possible.

TouchDevelop is a project at Microsoft Research focused on programming phones. It is fairly conventional semantically. They take a hybrid approach to syntax, using text at the statement level and structure editing above that level. Statement editing is facilitated by strong auto-completion guided by pick lists. They make the interesting observation that static typing is a requirement for touch programming so as to enable such auto-completion. YinYang, also from Microsoft Research, is aimed at tablets and explores an unconventional semantics of events particularly well suited to games and simulations.
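To make the static-typing point concrete, here is a minimal sketch (Python, chosen only for illustration) of how a known expected type lets an editor shrink a pick list. The `Candidate` class, the `pick_list` function and the member names in `LIBRARY` are hypothetical and are not TouchDevelop’s actual API; the only point is that without a static type for the slot under the cursor, every candidate would have to be offered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """Something that could appear in a completion pick list (hypothetical)."""
    name: str
    result_type: str  # static type of the expression this candidate produces


def pick_list(candidates, expected_type):
    """Keep only candidates whose static type fits the slot being edited."""
    return sorted(c.name for c in candidates if c.result_type == expected_type)


# Hypothetical phone-API members, for illustration only.
LIBRARY = [
    Candidate("senses.acceleration", "Vector3"),
    Candidate("senses.current_location", "Location"),
    Candidate("phone.battery_level", "Number"),
    Candidate("math.random", "Number"),
    Candidate("wall.title", "String"),
]

if __name__ == "__main__":
    # The cursor sits in an argument slot whose static type is Number.
    print(pick_list(LIBRARY, "Number"))  # ['math.random', 'phone.battery_level']
```

With five members the saving is trivial, but on a phone-sized screen cutting a library of hundreds of members down to the handful that type-check is what makes picking instead of typing practical.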

One might scoff at the toy-like nature of these languages. But remember that most of the major innovations in computers have started as toys. In fact touch might be the only way to eventually liberate programming from text. Frankly, the only way to get most programmers to stop using text editors will be to take away their keyboards. On the other hand, I saw a bunch of people at SPLASH/Onward using iPads with keyboards.

Programming on the small screens of phones and tablets also forces you to drastically simplify the language, offering yet another virtue from necessity. I’m liking the way this is going…

Not if the objects are instantiated while you’re programming…

Codify looks quite fantastic. A HyperCard for the age of touch? The homepage says that the Lua programming language doesn’t rely too much on symbols, but I didn’t understand why that would be. It still looks like strings of text to me… ?

Good point, Lach. TouchDevelop is a conventional “dead” programming environment. Sean McDirmid worked earlier on live programming but I am not sure if YinYang is.

Lua being “symbolic” is just marketing BS.

YinYang is not live yet. It’s definitely on the todo list, but I’m looking for a new twist.

Totally! Having played with Codify for a couple of days my first impressions of the programming environment are that it is extremely awkward to edit text on the iPad, so programming is very slow and error prone. Being a long-time programmer, I’m happy reading a purely textual representation of the program, but I want the editing gestures to edit the program itself (the abstract binding graph), not the characters of the textual representation. I guess I’ve been spoiled by IntelliJ, which lets one program largely by performing program transformations and lets you drop down to text editing as a fallback.
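A toy sketch of what “editing the binding graph” could mean, assuming a tiny hypothetical AST (`Binding`, `Ref`, `Call`, `Let` and `rename` are made up for illustration, and this is not how IntelliJ works internally): uses point at a binding object rather than repeating its spelling, so a rename is a single structural edit and the text is merely rendered from the tree.

```python
from dataclasses import dataclass


@dataclass(eq=False)
class Binding:
    """A named definition; uses point at this object, not at its spelling."""
    name: str


@dataclass
class Ref:
    binding: Binding

    def render(self):
        return self.binding.name


@dataclass
class Call:
    func: str
    args: list

    def render(self):
        return f"{self.func}({', '.join(a.render() for a in self.args)})"


@dataclass
class Let:
    binding: Binding
    value: str
    body: object

    def render(self):
        return f"let {self.binding.name} = {self.value} in {self.body.render()}"


def rename(binding, new_name):
    """One structural edit; every use re-renders with the new name."""
    binding.name = new_name


x = Binding("x")
program = Let(x, "3", Call("add", [Ref(x), Ref(x)]))
print(program.render())  # let x = 3 in add(x, x)
rename(x, "count")
print(program.render())  # let count = 3 in add(count, count)
```

Because there are no characters to select, there is nothing to mistype: the gesture picks the binding, and the textual view stays a read-only projection.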

>>forces you to drastically simplify the language, offering yet another virtue from necessity

So what do you think of Haskell?

Most touch-based languages, and most successful new graphical languages, seem to be created for games and graphics. I’m curious what could happen with a more general system. For instance, Waterbear (http://waterbearlang.com/), which is based on Scratch, seems well suited to touch devices, and it’s not hard to make a DSL with it for anything. The environment would need to be more polished (it’s kind of crappy now), but it has potential. With this kind of system, you can introduce all the IDE helpers we would need for touch programming.

One thing that is missing from current systems (correct me if I’m wrong): with strong typing like Haskell’s, it should be possible to offer you, almost anywhere, the methods/classes you ‘probably’ need. So a lot of intelligence would be directed at showing you what you probably need at that point, based on the variables available there (which you know in Haskell anyway). For instance, in Waterbear/Scratch you could sort the draggable elements so that the ones that ‘fit’ where you last worked are on top, so you usually don’t have to scroll clumsily on your iPad. And to decide what fits you can use the shapes and colors, which are your ‘strong typing’.
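One way the ‘fit’ idea could be scored, as a sketch under stated assumptions: types are plain strings standing in for Scratch’s shapes and colors, and `Block`, `fit_score` and `rank_palette` are hypothetical names. A block earns points if its result matches the type of the hole being filled and if every input it needs can come from a variable already in scope; recently used blocks break ties.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """A draggable palette block (hypothetical); types stand in for shapes/colors."""
    name: str
    param_types: tuple  # types of the inputs the block needs
    result_type: str    # type of the value the block produces


def fit_score(block, slot_type, in_scope):
    """Higher is better: +2 if it fits the hole, +1 if its inputs are in scope."""
    score = 0
    if block.result_type == slot_type:
        score += 2
    available = set(in_scope.values())
    if all(t in available for t in block.param_types):
        score += 1
    return score


def rank_palette(blocks, slot_type, in_scope, recently_used=()):
    """Best-fitting blocks first; recency (most recent first), then name, break ties."""
    recent = {name: i for i, name in enumerate(recently_used)}
    ordered = sorted(
        blocks,
        key=lambda b: (-fit_score(b, slot_type, in_scope),
                       recent.get(b.name, len(recent)),
                       b.name),
    )
    return [b.name for b in ordered]


PALETTE = [
    Block("say", ("String",), "Unit"),
    Block("distance", ("Sprite", "Sprite"), "Number"),
    Block("random", ("Number", "Number"), "Number"),
    Block("join", ("String", "String"), "String"),
]
scope = {"player": "Sprite", "enemy": "Sprite"}
print(rank_palette(PALETTE, "Number", scope, recently_used=["random"]))
# ['distance', 'random', 'join', 'say']
```

Here `distance` floats to the top because it returns a Number and both of its Sprite inputs are already in scope, so the block the programmer most likely wants needs no scrolling at all.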

I do see a role for voice recognition in programming right now, for instance for variable names and values, because obviously I don’t want to type them on the clumsy keyboards those touch devices have.

Siri-type stuff might have its uses in programming, though; there is a demo by Wolfram showing a kind of casual natural-language programming (by typing rather than talking, but it’s the same idea): http://blog.wolfram.com/2010/11/16/programming-with-natural-language-is-actually-going-to-work/. I don’t really believe that will work exactly as shown, but the combination of touch and speech should work fine IMHO. I have a very strong belief (as you can see with Codify) that all efforts that involve typing almost anything on the screen itself are doomed to fail. Cluttering your visual workspace with that on-screen keyboard is enough to get me out of the zone and disrupt my coding.

Drag and drop programming is incredibly painful from an efficiency point of view. You are correct that these systems utilize context poorly, but they also utilize space very poorly and require lots of RSI-inducing movements. I believe some kind of menu-ing will work better: it is much better at taking advantage of context, and it also makes it easier to prioritize choices. Now all we need is a probabilistic type system…
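A crude stand-in for prioritized menus, and not a probabilistic type system: among the type-compatible choices, estimate how likely each one is from a hypothetical log of past picks, using add-one (Laplace) smoothing so unseen choices still get some probability, and keep only the top few for a small screen. The `menu` function and all the choice names are illustrative assumptions.

```python
from collections import Counter


def menu(candidates, history, expected_type, k=3, alpha=1.0):
    """Return up to k type-compatible choices, most probable first.

    candidates: dict mapping choice name -> result type
    history: names the programmer picked before (a hypothetical usage log)
    alpha: smoothing constant (> 0) so unseen choices keep some probability
    """
    compatible = [c for c, t in candidates.items() if t == expected_type]
    counts = Counter(h for h in history if h in compatible)
    total = sum(counts.values()) + alpha * len(compatible)
    probs = {c: (counts[c] + alpha) / total for c in compatible}
    ranked = sorted(compatible, key=lambda c: (-probs[c], c))
    return [(c, probs[c]) for c in ranked[:k]]


CANDIDATES = {"length": "Number", "count": "Number", "sum": "Number",
              "max": "Number", "upper": "String"}
HISTORY = ["sum", "sum", "count", "upper", "sum"]
print(menu(CANDIDATES, HISTORY, "Number"))
# [('sum', 0.5), ('count', 0.25), ('length', 0.125)]
```

The type filter does the hard cut and the counts only reorder what survives it, which is roughly the division of labor a menu needs: a short list, with the likely choice under your finger.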

Dialogue-based programming systems are a bit tricky and I don’t think they will be ready for a while. However, check out Mozilla’s Ubiquity as an example system, and Cristina Lopes’ naturalistic programming paper, which discusses issues in this direction. At any rate, I don’t think speech will be faster, but it should have uses, e.g., when searching for a symbol by name.

I definitely didn’t mean to imply that speech is faster for programming itself; I really see it as helping to ‘name’ symbols. It would generally be faster than typing, but only for naming new things and (maybe) for search; the rest should come from context.

Thanks for that paper (http://www.ics.uci.edu/~lopes/documents/oopsla03/index.html), I hope I can find more papers about the subject.

I agree with you that for most programming drag & drop is actually quite horrible, but I’m searching for a solution that would work well on tablets, since that means it would work on the move. So no keyboards. And I truly see no solution in which you have to type, not even one word. I have tested open source speech recognition, and for variable names it seems to work.

If you create your ‘DSL’ on an actual ‘old-fashioned’ keyboard/computer, then I can see complete apps being developed with drag & drop + speech. For games this already works in some desktop apps; GameSalad, for instance, is a DSL for games that requires very little typing, and it would be easy to move it to a tablet interface. Hook up speech for symbol names and it would work quite well. Of course it should be seen as a DSL, as you wouldn’t want to write general-purpose systems that way. For general-purpose programming I don’t see that happening, but I guess that is where your and Jonathan’s interest lies.

I feel far behind there. The language I see as interesting for this work is Haskell, as it is purely functional and you can reason about it at a meta level, which means you can have a lot of assisted programming going on. As for where drag & drop and speech recognition come into play, I don’t believe that will work with ‘basic’ Haskell, but with a DSL (or just a library) I believe it would work well.

Has anything ever been attempted for a full language, not a toy language? All I have seen so far are DSLs (and usually toys to boot).

If you are interested in Haskell, then check out Conal Elliott’s work on Tangible Values (Tangible Functional Programming, ICFP 2007).

I think we should start with DSLs and toys and try to expand out to general purpose programming. Many of the issues are orthogonal, but a high-level programming model is also useful in reducing the amount of code that needs to be written (very useful on a tablet).

Are you aware of research on context-assisted IDE hints (I don’t know the proper name for it)? I never understood why IDEs are not smarter about that; modern environments know so much about the context you are working in, so why not give more hints during coding? Computers are fast enough.

RFP + context hints would be a step in the right direction, I think? People have become used to continuous feedback, and yet IDEs do not seem to be progressing much in that direction.

Try Greg Little’s chickenscratch work and his OOPSLA paper for similar code search in Java.

IDEs are not getting much smarter because our languages are only so analyzable, in real time or otherwise. Even Haskell throws you into a sea of types, but those types still don’t express much of the intent needed to assist developers! I believe we need to design new languages that have better IDE stories, which is achievable if you design them together (the editing experience is a part of the language).

I messed up on my last post. The paper to read is Greg Little’s “Keyword Programming in Java” at ASE 2007, while Chickenfoot is the other MIT project that I think influences this work.
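For a feel of the keyword idea, here is a much simpler stand-in than the algorithm in Little’s paper: it only scores existing method names by how many query keywords they contain, and it does not assemble nested expressions. The method list and the `tokens` and `rank_methods` functions are hypothetical, for illustration only.

```python
import re


def tokens(identifier):
    """Split a dotted camelCase identifier into a set of lowercase words."""
    return {p.lower() for p in re.findall(r"[A-Z]?[a-z]+|[A-Z]+(?![a-z])|\d+", identifier)}


def rank_methods(query, methods):
    """Rank method names by how many query keywords they contain; drop non-matches."""
    words = set(query.lower().split())
    scored = [(len(words & tokens(m)), m) for m in methods]
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [m for score, m in scored if score > 0]


# A hypothetical slice of an API, for illustration only.
METHODS = ["List.add", "BufferedReader.readLine", "String.trim", "String.toLowerCase"]
print(rank_methods("add line", METHODS))
# ['BufferedReader.readLine', 'List.add']
```

For the query “add line” both `List.add` and `BufferedReader.readLine` match one keyword each; a real keyword-programming system would try to combine them into a single nested call, which this sketch does not attempt.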