There is one gigantic problem with programming today, a problem so large that it dwarfs all others. Yet it is a problem that almost no one is willing to admit, much less talk about. It is easy to illustrate:

Too goddamn much crap to learn! A competent programmer must master an absurd quantity of knowledge. If you print it all out it must be hundreds of thousands of pages of documentation. It is inhuman: only freaks like myself can absorb such mind-boggling quantities of information and still function. I wager that compared to other engineering disciplines, programmers must master at least ten times as much knowledge to attain competence.

The tragedy is that this problem is not inherent in the nature of software: it is largely our own fault. There are many reasons for this tragedy but as usual in such societal failures they boil down to the fact that the people with the power to change things have a vested interest in not doing so. But that is a topic for another discussion. For the moment I just want to define the problem.

I conjecture that in order to fix the problem we first have to be able to quantify it. Everyone gives lip service to simplicity. Then they use C++. We need an objective measure of what makes programming hard in order to hold people accountable and make progress. I want to propose a simple approximate measure of programming difficulty:

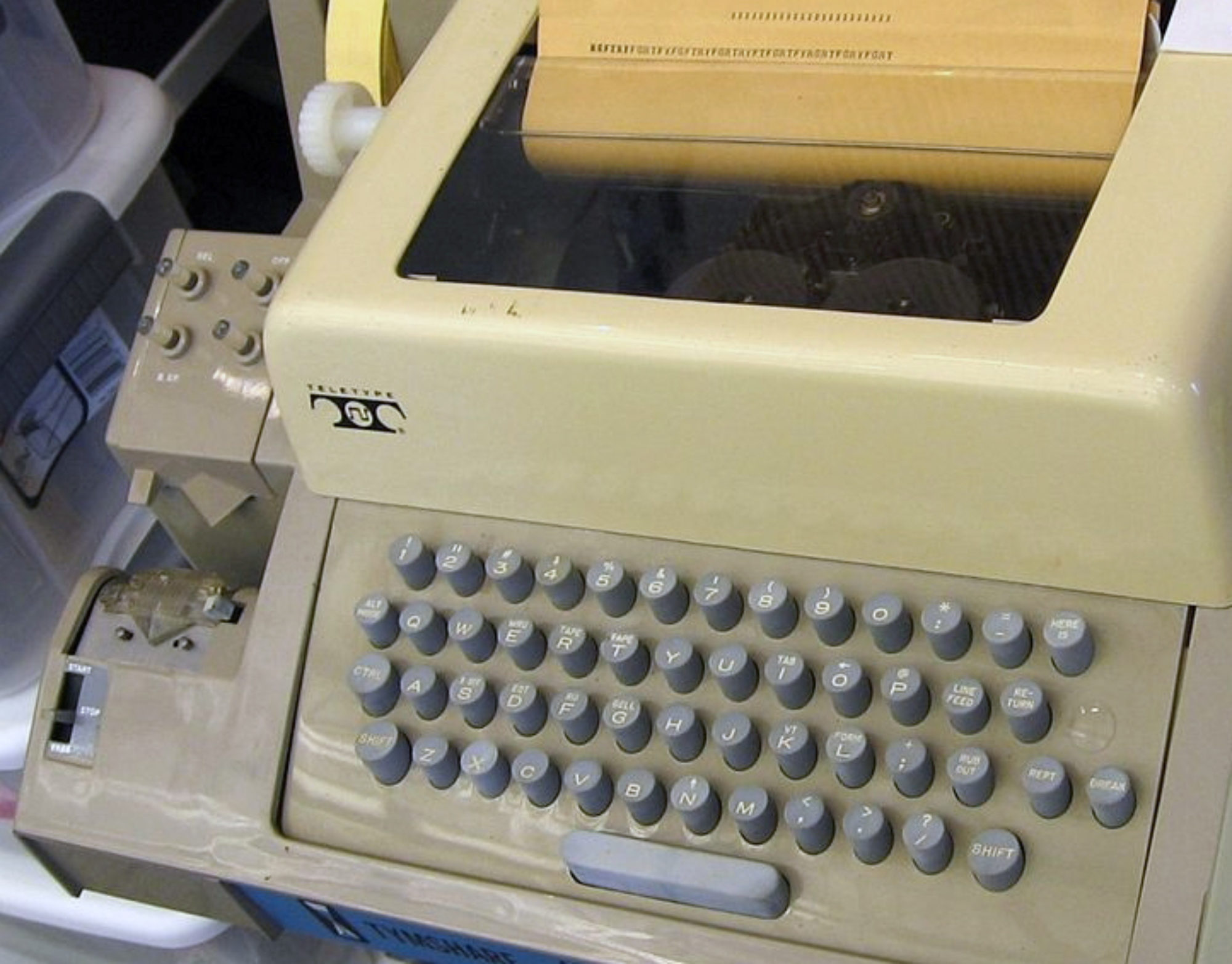

Difficulty = pages of reference documentation

In other words, the height of that stack of manuals pictured earlier. Let me qualify this definition a bit. This measures the complete documentation of a programming technology for programmers to fully understand and use it. Not tutorials. Not guides. Not code comments.

Clearly this is an imperfect approximation. An average page of the C++ manual contains a lot more knowledge, presented a lot more obscurely, than an average page of the Lua manual. So the nominal 12x page ratio underestimates the actual difference. On the other hand, regular expressions are documented very precisely and concisely despite being tortuous to use. Nevertheless I want to suggest that on the whole documentation size is a reasonable approximation of how hard it is to program. More importantly, it is the only such measure I know of. I welcome better alternatives.
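To make the measure concrete, here is a minimal sketch in Python. The page counts are placeholders chosen only to reproduce the nominal 12x ratio; they are not measurements of any real manual, and the names `difficulty` and `page_ratio` are made up for illustration.

```python
# Sketch of the proposed metric: difficulty = pages of reference documentation.
# All page counts below are hypothetical placeholders, not real measurements.

def difficulty(stack):
    """Total reference pages across every technology in a stack."""
    return sum(stack.values())


def page_ratio(pages_a, pages_b):
    """How many times longer reference A is than reference B."""
    return pages_a / pages_b


# Hypothetical numbers, picked so the ratio comes out at the nominal 12x.
big_language_pages = 1200
small_language_pages = 100

# A hypothetical stack: a language plus the libraries and tools around it.
stack = {"language": 1200, "standard library": 800, "build tool": 150}

print(page_ratio(big_language_pages, small_language_pages))  # 12.0
print(difficulty(stack))  # 2150
```

The sum over the whole stack is the number that matters for a newcomer: it counts everything that has to be read, not just the language reference.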

I have found this metric quite helpful in my own work. All designers agree that simplicity is crucial, but how do we tell when we are in the trenches fighting with different designs of a language or API? Thinking about documenting the design to its users is a good way to clarify things. Whatever makes the documentation shortest is the best.

Perhaps this metric could also help solve a big problem with computer science research: simplicity is not a result. We can’t publish papers claiming that one language or tool is simpler than another, because there is no “scientific” way to evaluate that claim, other than empirical user studies which are essentially impossible. That is why CS research focuses on hard results like performance and theory. If researchers could accept documentation size as an approximate measure of difficulty we might be able to at least discuss the issue.

But none of that really matters. What really matters is what this metric tells us about the future of programming. By far the most effective thing we can do to improve programming is:

Shrink the stack!

I am talking about the whole stack of knowledge we must master from the day we start programming. The best and perhaps only way to make programming easier is to dramatically lower the learning curve. Now hold on to your seats, because I am going to break the most sacred taboo of our field. To shrink the stack we will have to throw shit away. Heresy! No one wants to even talk about it, much less risk their careers on it. Well, not quite no one: Alan Kay has been a lone voice in the wilderness saying this for decades.

What we need is revolutionary thinking. Revolutionary in the sense of paradigm-shifting ideas, and revolutionary in the sense of angry citizens overthrowing corrupt regimes.

Programmers of the world, unite!

You have nothing to lose but your docs.

Are people really in denial over this? I feel like most professional developers would agree. Though I suspect a lot of them don’t think it’s a humanly solvable problem. I’m not convinced either way.

I actually think that the problem IS inherent, partly. Software allows us to make systems that are much more complex than any system we could build without software, which is kind of the whole point of computers. For example, having worked at Google (the ultimate complexity), I think (a) of course Google’s software could be much simpler than it is, but (b) even if it was all optimally simple, it would still be much more complex than any existing non-software artifact, and understanding it all would be effectively impossible even for the smartest humans.

That said… yes. Make it easier. Everyone, throw shit away. Please.

I really do enjoy reading your blog.

Your words above are correct, but your reaction, when confronted with simplicity, belies your beliefs. I’ve been faced with this kind of reaction for a couple of decades. Paul Morrison has withstood it for even more decades.

Here’s a diagram compiler written in FBP style (i.e. self-compiling), using 800 lines of code (600 CL, 200 C):

https://github.com/guitarvydas/vsh

Gotta go watch game 7…

To shrink the stack is an admirable goal, but not easy to achieve or judge. It is easy to get trapped: to develop a system that seems simple and supports a few common use-cases but increases complexity for everything else. Further, heterogeneity – of protocols, of data models – is natural, a consequence of contextual optimization and immediate convenience and avoiding lossy conversions. And so there will always be integration challenges between subsystems with different stacks.

I’ve been thinking about this problem for ten years – how to lower the bar for programming and open systems integration, to make manipulations of one’s ubiquitous computing environment or augmented reality or video games into a common experience. One must ask not only “how do we create systems that scale to a million nodes” but also “how do we create systems that scale to a million programmers” and “how can we create systems where individual programmers can join an active system, explore, and grow in capability, responsibility, experience, and valid intuitions”.

My best answer, so far, is exhibited in my RDP model with its strong focus on compositional reasoning, resilience, staging, compositional security, collaborative state models and resource control. But even RDP doesn’t achieve all the properties I desire, e.g. with regards to exploratory operation.

Call-Return (implicit synchronization) is a deadly cancer. Dijkstra got it wrong. GOTO was not the problem.

Get rid of this shit.

OO is a cancer. It obscures control-flow. (Kay used the right language, but missed the mark, OO doesn’t do “message sends”, it’s all deadly synchronous).

Get rid of this shit.

Functional thinking is a cancer. Nobel laureate Ilya Prigogine spends the first 100 or so pages of this book http://www.amazon.ca/ORDER-OUT-CHAOS-Ilya-Prigogine/dp/0553343637 explaining how “functional” thinking derailed physics for a century.

Get rid of this shit.

The concept of “processes” is an epicycle layered onto call-return to mitigate the damage. One can tell that it’s an epicycle because of the fact that everyone thinks that processes are “hard” (including Liskov http://www.infoq.com/presentations/programming-abstraction-liskov )

Get rid of this shit.

What’s left?

Asynchronous components.

One-way events. (IP’s, as JPM calls them). Events that travel down “wires”.

The concept of Time.

Simple.

FBP has been used to build banking systems, real-time embedded systems, POS, and desktop IDE’s.

http://www.jpaulmorrison.com/fbp/

http://www.visualframeworksinc.com

http://www.amazon.com/Event-Based-Programming-Taking-Events-Limit/dp/1430243260/ref=sr_1_1?s=books&ie=UTF8&qid=1368489484&sr=1-1&keywords=event+based+programming

http://noflojs.org/

http://en.wikipedia.org/wiki/Bourne_shell

http://www.flowbasedprogramming.com/

(oop, back to the game).

Re: what’s left – there is also synchronous concurrency (cf. cellular automatons, discrete event logic, temporal logic, synchronous reactive programming), and explicit logical synchronization.

And dataflow (direct modeling of time-series data) is a powerful alternative to events (modeling diffs/edges in data). I believe events are problematic for lots of reasons, that they don’t scale nicely.
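The distinction can be sketched in a few lines of Python. This is only an illustration under stated assumptions: a signal is a list of samples, an event is an edge where the value changes, and the function names `to_events` and `to_samples` are invented here. The dropped-event case at the end shows one commonly raised concern with event streams; whether it is among the reasons meant above is an assumption.

```python
def to_events(samples):
    """Turn a time series into edge events: (time, new_value) on each change."""
    events, previous = [], None
    for t, value in enumerate(samples):
        if value != previous:
            events.append((t, value))
            previous = value
    return events


def to_samples(events, length):
    """Rebuild the time series by holding the last value seen."""
    samples, current, pending = [], None, dict(events)
    for t in range(length):
        current = pending.get(t, current)
        samples.append(current)
    return samples


signal = [0, 0, 1, 1, 1, 0, 0, 2]
events = to_events(signal)
print(events)  # [(0, 0), (2, 1), (5, 0), (7, 2)]

# With every event delivered, the consumer reconstructs the signal exactly.
print(to_samples(events, len(signal)) == signal)  # True

# Lose a single event and the consumer's state stays wrong until the next edge.
lossy = [e for e in events if e != (5, 0)]
print(to_samples(lossy, len(signal)))  # [0, 0, 1, 1, 1, 1, 1, 2]
```

A consumer that reads the time series directly and misses one sample is wrong for one tick; a consumer that integrates edges and misses one is wrong until the value changes again.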

Effective modeling of asynchronous systems is a must, but I’d prefer to model it explicitly (i.e. using intermediate state) rather than the asynchronous-by-default of some programming models (e.g. actors, FBP). Asynchronous-by-default creates a lot of non-essential complexity in terms of state management and non-determinism.

To rant against ‘functional reasoning’ in programming because it derails physics seems to assume that a good programming model should create the same challenges for programmers that physics presents for inventors/manufacturers. Let me know if you’re ready to add fans or heat-sinks to your FBP programs. Otherwise, accept that programming, and reasoning about programs, should probably be easier than physics.

(reply to myself, in order to preserve screen real-estate)

David,

You and I appear to have a low-level communication problem. Certain words seem not to connect.

It could be that the word “event” has morphed, unnoticed, underneath me over time. Certainly no one at DEBS2007 (distributed event based systems) understood what an event was (well, from my perspective ;-), so let me try to be more explicit:

I use event in the ’70’s hardware sense – a pulse (of data). Pushed, not pulled. In my book, the first event in a chain (flurry) of events is a hardware interrupt. The interrupt handler reads the data from the port and rebundles it as a small struct and shoots it down a “wire” (represented by a table, or something).

Morrison uses the word IP (information packet). In his world (big iron), he expects a continuous, back to back, flow of “records”, hence, bounded queues.

In my world (bare iron), I expect pulses of small data with large (in cpu-terms), unpredictable gaps between them, so I continue to use the word “event”, and use unbounded queues.

The difference between FBP and other message-sending systems is the concept of an “output port”. A component is snipped at both ends – its input and output interfaces are explicitly defined. Components are well-encapsulated – they cannot see (name) the receiver of their output events. Only the “parent” component can wire its children together.
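A minimal sketch of that idea in Python, under loud assumptions: the class names `Component`, `Schematic`, `Doubler` and `Collector` are invented for illustration and are not any real FBP library's API, and a single-threaded dispatcher with an unbounded `deque` stands in for genuinely asynchronous parts.

```python
from collections import deque


class Component:
    """A leaf part with declared input and output ports.

    It can only send to its own named output ports; it has no way to
    name whoever receives the event.
    """
    inputs = ()
    outputs = ()

    def __init__(self):
        self._emit = None  # installed by the parent schematic

    def send(self, port, data):
        assert port in self.outputs, f"unknown output port {port!r}"
        self._emit(self, port, data)

    def react(self, port, data):
        raise NotImplementedError


class Doubler(Component):
    inputs = ("in",)
    outputs = ("out",)

    def react(self, port, data):
        self.send("out", data * 2)


class Collector(Component):
    inputs = ("in",)

    def __init__(self):
        super().__init__()
        self.seen = []

    def react(self, port, data):
        self.seen.append(data)


class Schematic:
    """The parent: owns the children, the wires, and the event queue."""

    def __init__(self):
        self.wires = {}       # (sender, out_port) -> [(receiver, in_port)]
        self.queue = deque()  # unbounded queue of pending events

    def add(self, child):
        child._emit = self._route
        return child

    def wire(self, sender, out_port, receiver, in_port):
        assert out_port in sender.outputs and in_port in receiver.inputs
        self.wires.setdefault((sender, out_port), []).append((receiver, in_port))

    def _route(self, sender, out_port, data):
        # Only the parent looks up who is on the other end of a wire.
        for receiver, in_port in self.wires.get((sender, out_port), []):
            self.queue.append((receiver, in_port, data))

    def inject(self, receiver, in_port, data):
        self.queue.append((receiver, in_port, data))

    def run(self):
        while self.queue:
            receiver, in_port, data = self.queue.popleft()
            receiver.react(in_port, data)


top = Schematic()
doubler = top.add(Doubler())
sink = top.add(Collector())
top.wire(doubler, "out", sink, "in")
for n in (1, 2, 3):
    top.inject(doubler, "in", n)
top.run()
print(sink.seen)  # [2, 4, 6]
```

Note that `Doubler` never mentions `Collector`. The only place the two meet is the `wire` call made by the parent, which is the property the paragraph above describes.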

This property gives: hierarchical composability, the ability to move components around (rearchitect) (https://groups.google.com/forum/?fromgroups=#!topic/flow-based-programming/ee5uTVh4Bhk), encapsulation, specifiability, testability, integration before the code is written (one can compose and check the architecture / integration before the inner code is written), great scalability, multi-coreification, reusability of architecture (cut/paste diagrams) and – the ability to draw semantically concrete, compilable diagrams (“self documentation”).

This is where message-based systems (CSP, OCCAM, Actors, Erlang, Mitel’s original SX2000) fail – they use spaghetti messaging, they allow the senders to see/name the receivers (breaking encapsulation).

Hierarchical composition is to messaging as was structured programming to spaghetti programming.

The meme of this thread is to “throw stuff away”. FBP does just that – what’s left is boxes and wires. My bare-metal version adds bubbles and arcs (for state diagrams inside the leaf-boxes). The count of the full set of diagram symbols needed for this is under 10 (not counting the symbols in the textual expression language which is used to annotate transition arcs).

And, surprisingly, the result is immensely usable. A large class of problems (e.g. protocols) becomes “easy” to solve.

(Even if RDP turns out to be “better”, FBP “should be” used for programming everything in the interim :-).

As for functional, Liskov agrees with me, in the above-pasted video. But, I was just using it for metaphorical effect (I also study songwriting). Kind of a “Snap out of it! Everything is on the table, everything should be considered for the discard heap”. We need a Peter Walsh “Enough Already” cleansing of the clutter that has accumulated in this field. FBP concretely proves that this is possible whether or not you agree with its design choices.

I strongly support Jonathan’s efforts along these lines.

I agree that FBP has a lot of good properties for simplifying systems, and that the hierarchical composition / external wiring (rather than ‘spaghetti messaging’ – nice term) is certainly among those properties.

FBP has weaknesses, though, e.g. with respect to reasoning about effects on shared resources that exist external to the FBP diagram (such as the filesystem, network, database, sound system, UI). FBP’s also a little weak on open composition, i.e. the ability to observe, modify, or add features to an existing component without invasive modification. Of course, procedural/OOP/functional have a lot of the same weaknesses. But I think we can do a lot better. Exploring how led me away from events and implicitly asynchronous programming.

Your 1970s idea of ‘events’ is not so different from mine. You use the phrase ‘pulse of data’ but what is a pulse if not a blip, a meaningful edge, in a continuous stream that happens to be predictably boring most of the time. And the meaning of a pulse is often the same, describing or effecting a change in data. Even if there are some subtle differences in concept, I believe the majority of the list of issues I described for events will still apply.

I agree that the notion of ‘process’ is problematic. And I’m not going to argue that functional is great, only that your argument against it was not great. 🙂

:-).

I don’t need great arguments. I just want to stop people in their tracks and have them re-examine their crazy base beliefs. (Songwriting, dude).

“FBP has weaknesses, though, e.g. with respect to reasoning about effects on shared resources that exist external to the FBP diagram …”

Apparently, I’m not making myself clear.

There is just about nothing that is not on my bare-metal FBP diagrams (“BMFBP”, I guess).

My diagrams show the interrupt handlers. File system, UI, network, database – all implemented as BMFBP diagrams. The whole kit-and-kaboodle. A little cruft at the edges – a few (literally) lines of assembler to read a hardware port, then turn interrupts back on and repackage the data into an “event” struct.

BMFBP is a hierarchical diagram of a (or a set of) device-driver(s) that get things done. No O/S required.

Hey, there’s another concept to get rid of! In the ’70’s we all thought that the O/S would be the uber-library that would provide all of the functionality we needed to build simple apps.

Instead, we can just build simple apps using diagrams and throw the O/S out with the garbage.

“FBP’s also a little weak on open composition, i.e. the ability to observe, modify, or add features to an existing component without invasive modification.”

I probably don’t understand your language at this point…

BMFBP can roll out changes on a per-component basis. If you define the inputs and the outputs of a component, you can simply replace the component with a “pin-compatible” one.

You can re-wire an architecture by replacing a “parent” component (what I call a schematic – the one which supplies the wiring between its children).
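As a rough sketch of what “pin-compatible” replacement could look like, assuming a part is fully described by its port names plus a handler: the names `Part`, `Schematic`, `pin_compatible` and the string-formatting example are all hypothetical, chosen for illustration rather than taken from any BMFBP implementation.

```python
from collections import deque


class Part:
    """A part is described entirely by its ports plus a handler."""

    def __init__(self, inputs, outputs, handler):
        self.inputs = frozenset(inputs)
        self.outputs = frozenset(outputs)
        self.handler = handler  # called as handler(port, data, send)


def pin_compatible(old, new):
    """Two parts are interchangeable if their port sets match exactly."""
    return old.inputs == new.inputs and old.outputs == new.outputs


class Schematic:
    """The parent part: a table of child slots and a table of wires."""

    def __init__(self, parts, wires):
        self.parts = dict(parts)  # slot name -> Part
        self.wires = dict(wires)  # (slot, out_port) -> (slot, in_port)
        self.log = []             # events that reach an unwired output

    def replace(self, slot, new_part):
        if not pin_compatible(self.parts[slot], new_part):
            raise ValueError(f"{slot}: replacement is not pin-compatible")
        self.parts[slot] = new_part

    def run(self, slot, port, data):
        queue = deque([(slot, port, data)])
        while queue:
            slot, port, data = queue.popleft()

            def send(out_port, value, _slot=slot):
                target = self.wires.get((_slot, out_port))
                if target is None:
                    self.log.append((_slot, out_port, value))
                else:
                    queue.append((*target, value))

            self.parts[slot].handler(port, data, send)


shout = Part({"in"}, {"out"}, lambda port, s, send: send("out", s.upper()))
title = Part({"in"}, {"out"}, lambda port, s, send: send("out", s.title()))
wide = Part({"in", "config"}, {"out"}, lambda port, s, send: send("out", s))

app = Schematic(parts={"fmt": shout}, wires={})
app.run("fmt", "in", "hello world")
app.replace("fmt", title)  # same pins, different insides
app.run("fmt", "in", "hello world")
print(app.log)  # [('fmt', 'out', 'HELLO WORLD'), ('fmt', 'out', 'Hello World')]

try:
    app.replace("fmt", wide)  # extra input pin, so it is rejected
except ValueError as err:
    print(err)  # fmt: replacement is not pin-compatible
```

Re-wiring by replacing the parent corresponds, in this sketch, to building a second `Schematic` over the same parts with a different `wires` table.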

This was a key element of a Y2K fix for a major bank.

The furthest we took this concept, in the field, was to download updated components, then reboot and rewire. I believe, but have not proved in practice, that it would be possible to hot-rewire components by simply pinching the queues, reloading, then releasing the queues.

I’ve been skimming your readme (the first time I’ve seen anything concrete about RDP). We’re not far apart in thinking. You want to use functional behaviours which connect with ephemeral resources and signals.

I want explicit – and diagrammatic – control of all 3 of those things. And, if I have to dork with state, that’s OK with me, since I learned how to do that in EE class – hierarchical state machines, gray code, race conditions, etc.

It’s simple.

BMFBP sounds fascinating.

But representing resources in the diagram is not the issue I was describing. Rather, the issue regards “reasoning about effects on shared resources” – for example, where a few components may write a file “foo.txt” and a few components may read it. And while I suppose you could copy-and-paste filesystem implementations represented as diagrams, you certainly cannot do the same for a network or Internet; ultimately, the resources that concern us are external to any FBP diagram.

There is, of course, the possibility of representing the shared filesystem as a diagram external to every other diagram that interacts with it, with potentially massive fan-ins and fan-outs. The cost is effective encapsulation of filesystem effects and feedback.

Anyhow, I like the idea of being rid of the OS concept. Though my preference is instead for applications to operate on a constrained abstract API, of which the browser’s document object model is an example (though I’d prefer a non-imperative API). We can then compile to multiple concrete targets.

The ability to “roll out changes on a per-component basis” describes update, not open composition (aka extensibility). Update typically has an authoritative source (i.e. the upstream component providing the schematic), whereas extension is something other agencies can accomplish without control of the primary schematic. Upgrade vs. pluggable architecture. Update has its challenges, of course – persistence, loss of data or partial work, atomic update of multiple components. And extension has its challenges – security, robustness, consistency, resource control, priority.

FBP has evolved a few ways to handle update, though it doesn’t address the issue of data-loss very well (developers must simply be disciplined, avoid long-term use of local state). But I’ve never seen an FBP that handles extension effectively.

Download a couple of new parts.

Download a modified top-level schematic (which is also just a part).

Hit reset.

Newly extended application runs.

Does that not meet the criteria for extension?

It does not. You are relying on invasive modification regarding the new top-level schematic. You are still relying on centralized authority, for the download and reset button. The composition process you describe is a ‘closed world’. It is not ‘open’ composition or extension.

Please, continue.

Haxe has a pretty good power to documentation ratio 😀

Is it really true that other engineering disciplines have fewer “prerequisites” to get started doing Real Work? It at least seems clear that many more high school students become accomplished programmers than chemical engineers. There’s the issue of availability of required equipment, but it’s not obvious to me if there isn’t serious background knowledge required for the classic engineering disciplines, and it just happens to be more favored by the educational establishment as content that “everyone” should learn (e.g., basic calculus, chemistry, physics, …).

I’m ready to believe, though, that the voluminous background knowledge of other engineering disciplines is more “fundamental” somehow, based more on the physical world and less on accidents of tool design.

That’s a good question, Adam. This idea crystalized for me in a presentation from the Mech Eng dept about their curriculum (my daughter is entering in the Fall). Mech Eng students must really learn a lot, indicated by the stack of textbooks they must master. But these textbooks remain largely valid and useful throughout their careers. Whereas in CS what career-long textbooks do our students get? CLR (algorithms and data structures) for sure, but not much else. On the other hand, I am betting that we find the opposite if we compare the quantity of day-to-day working knowledge that practicing mechanical and software engineers use.

What is great about a metric is that we can answer such questions by doing experiments. I am thinking of getting a UROP to do just that. Interested?

The difference becomes clear when you separate ‘hard’ from ‘bloated’. Take physics. Quantum mechanics is hard, but can be described on one page. To understand it you need to read a book. The same goes for a subject like calculus. You often see ~1000 page calculus books, but calculus too can be described on one page. In the mind of a person who knows calculus, it has a compact representation. The 1000 page book is full of explanations and exercises to build up that compact representation and the skills to use it in the mind of the reader. This is not so for the C++ standard. There is no compact representation of the C++ standard even in the mind of the most brilliant C++ programmer. That doesn’t mean that the representation in the mind of a C++ programmer is quite as bloated as the written down standard. For example the type checking rules have some redundancy with evaluation rules. The type checking rules are constrained by the evaluation rules. So the knowledge can be compressed, but nowhere near as much as in a subject like calculus or quantum mechanics. A lot of incompressible knowledge remains because the C++ language design does not follow from a small set of principles, but rather from a very large set of decisions made by a design committee.

It doesn’t have to be that way for a programming language, we already know that Scheme isn’t nearly as bloated as C++, and a core language that’s even more strictly based on lambda calculus can be smaller still (e.g. a dependently typed language).

On the other hand in many areas we’re stuck with a very bloated system (e.g. the web), and in some cases we don’t even have a compact alternative. Alan Kay is doing great work to fix that by building a compact alternative for the full computing stack, from programming language all the way up to word processing and an alternative to the web.

>Difficulty = pages of reference documentation

An interesting statistic. Thomas Sowell’s book on economics talks about a similar simple but effective statistic when discussing the effects of rent control. They measure the commute time of police officers as a measure of city unaffordability. All cities have police, and commute time is a simple metric to gather.

The beauty of the Oberon OS was that things are simple if dealt with at the right level.

Software that I’ve encountered that is Simple (by J.E.’s criteria and by my (unspecified) criteria):

Lisp 1.5

Smalltalk blue book

Forth

Tiny Basic (DDJ)

Unix V7

K&R C (simple != elegant 🙂)

forks, pipes

OCG (orthogonal code generator, Cordy)

SSL (syntax semantic language – R.C. Holt et al)

Fraser/Davidson RTL (pre-gcc)

PEG (parsing expression grammars)

Rob Pike

Small C (DDJ)

Ratfor and Software Tools

factbases (bags of triples w/o xml syntax)

diagrams (concretely compilable)

the SubText demo (don’t know how complicated it was under the hood, but it was simple externally)

solving one problem instead of a “class” of problems (after having learned simplicity 🙂

Your metric goes against Einstein’s law of ideal simplicity: “everything should be made as simple as possible, but no simpler.” Ok, I made that up, but it’s important not to go overboard on our simplifications: other factors like performance, flexibility, or just power are important also.

Your other point on shrinking the stack is really about diversity and the failure of natural selection to remove obsolete or ineffective technologies fast enough. I honestly don’t think this is a problem: yes there is a lot of crap out there, but we, at least, can ignore most of it; there isn’t a recognized crisis in practice and only unrealized potential. Also, consider that we are still a very new field that keeps growing exponentially. It’s bound to stay messy for another hundred years or so.

Finally, I’m a big believer in organic methods. Something will be complicated before it becomes simple; it’s hard to design something that is simple right off the bat (I’m constantly simplifying my code…should have paid more attention to Worse is Better). I think as long as we keep trying at it, things will gradually get simpler, if only because our experience and knowledge have increased.

I have to disagree Sean.

We are so far from going overboard on simplicity that we haven’t even boarded the boat yet. Yes we are a youthful field, and like youth are intoxicated with the thrills of performance and expressive power. Performance and expressivity are counterproductive in 90% of programming. Think C++. Our entire field is one giant premature optimization.

We can’t rely on organic evolution to naturally lead to progress, because software is not physical: it doesn’t wear out and it has no cost of production. Every other engineering discipline gets to evolve in the normal course of building replacements. If you have to build a new wheel, you might as well incorporate what you learned building the last one. But we are still using the very first wheel ever invented, because it never wears out and it would cost something to replace it. The one thing we don’t do enough of in software is reinventing the wheel!

I think both you and Sean have good points. There are quite a few cases where software does evolve, e.g. when the software itself is a towering monument (e.g. video games).

But there is also a lot of infrastructure that does not evolve so easily due to various network effects or virtuous/vicious circles and other equilibria. Organic optimization methods tend to stabilize on local minima or maxima. If we constantly simplify relative to the existing stack, that’s all we’ll ever achieve.

Even in the case of video games, it doesn’t evolve as quickly as you might think. Games still contain code that was written 20 years ago, and I’m not talking about a low level library or anything, I’m talking about actual game code like saving the game state.

But yea, the real problem is where there is some kind of social lock in, like in network protocols and things like the web.

Perhaps we are talking about two things: one is diversity and the other is flat out complexity. Let’s just ignore diversity for now and assume there is one true stack to learn. Pretending we are a middle manager, we are not confident about our devs and will pick whatever appears simplest…we are going LCD (least common denominator). Capability is not as important as minimizing risk, but we might not deliver the product that we need to. Worse is better, except when it isn’t.

Software rots: it doesn’t do what we need it to do, and it can’t be upgraded very easily; the people who wrote it left the company, the documentation is nonsensical. Sure, there are systems still running from a long time ago, but they pale in comparison to new systems. I would bet that the average age of code in production is fairly young given the growth of our industry.

Vernor Vinge’s dystopian future of software archaeologists mining code from the 70s, though a cool thought, isn’t going to happen. Code simply isn’t that robust. We will continue to improve and simplify our industry slowly until the deep neural nets take over and make us obsolete anyways.

Working with big banks in my previous life left me less optimistic. There’s still billions of lines of COBOL production code. There’s a healthy market for 3270 and ANSI terminal emulators to hook up to ancient mainframe and unix systems. You can still run DOS programs in Windows. I guess the average age of a line of code in a corporation is at least 2/3 the age of the corporation.

It doesn’t matter if the average age of a line of code in one corporation is 2/3 the age of the corporation. If we are talking about the entire industry, lots of companies are dying and starting up, and many are expanding with new software projects. So the base might remain, but becomes increasingly insignificant over time.

Consider that the new.ycombinator.com world is completely disjoint from the enterprise world. They definitely have their own complexity issues, but legacy is not one of them. Or consider what post-PC means: the PCs are not going away, it’s just that a new market is growing quickly around them.

@JE Interesting point, re. reinventing.

It got me to realize that that is what’s happened to me. After every consulting contract I finish, I walk away only with the ideas, the IP is signed over to the customer. I’m on about the 7th version of an FBP “kernel”(s). V2 and V3 had 2nd-system syndrome, subsequent versions had progressively less cruft.

This was also noted at Cognos during the Eiffel project years. They found that a class had to go through 3 iterations before becoming “good” (reusable, “general”).

One way you could view it is to assume you have a super-computer which can do anything, you are therefore a God, what would a God use to control his realm?

Really the problem is that we are Gods over the bit, the 1’s and 0’s, but we don’t have a mature solution for this realm. If we assume we are Gods, not programmers, and view it in this way, the solution might appear by changing the initial assumption.

I had better give an example to make my point more meaningful. Normally when we program we start with a class and build it up in features until it resembles what we want. When we want to program a “cat” object or a “dog” object, we build it up for the features we want.

What we could do is start with a class which has already all features written, a super powerful class for “cat” which can do anything a cat could do, it can render in 3D or 2D or text, it can run, stalk, hide, anything. What you do then is you cut the class down, rather than building up.

You start with classes which are all fully programmed and cut them down to a smaller feature set which resembles what you want. This would require some people to write all these classes in the first place.

It would be easier to cut an object down to what you want than to build it up from nothing: starting with a large something and making it less capable and more specific is easier than starting with nothing and making it more capable.

So… you’re interested in constraint programming?

Hi David, not sure that we have the same understanding. Constraint programming is still a process of building up the program from constraints and finding a solution to a problem given the constraints.

I’m saying, why not start with code which has already been written and cut it down to what you want – ie, imagine if there was a cat class which is very large and written to work with every other API, with OpenGL, text inputs and outputs, and it can do anything a cat can do. It can render to OpenGL in 3D with fur.

So you want to make a cat in a 2D game, so you turn off the features you don’t want, you turn off the 3D support, you turn off its ability to move in every direction and make it constrained to 2D directions (N,S,E,W). You turn off all the features you don’t want, then you end up with the cat you need for your computer game.
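A toy sketch of the cutting-down idea in Python. Everything here is hypothetical: the `Cat` class, its feature names and the `without` method are invented to illustrate the approach, and the “does everything” class is faked with a handful of one-line features.

```python
class Cat:
    """Hypothetical 'does everything' class; each feature is a named capability."""

    FEATURES = {
        "render_3d": lambda self: "3D cat with fur",
        "render_2d": lambda self: "2D sprite",
        "render_text": lambda self: "=^.^=",
        "move_free": lambda self: "moves in any direction",
        "move_grid": lambda self: "moves N, S, E or W",
    }

    def __init__(self, enabled=None):
        # A fresh cat starts with every feature switched on.
        self.enabled = set(self.FEATURES if enabled is None else enabled)

    def without(self, *features):
        """Return a smaller cat with the given features switched off."""
        return Cat(self.enabled - set(features))

    def do(self, feature):
        if feature not in self.enabled:
            raise AttributeError(f"{feature} has been cut from this cat")
        return self.FEATURES[feature](self)


full = Cat()
game_cat = full.without("render_3d", "render_text", "move_free")
print(sorted(game_cat.enabled))  # ['move_grid', 'render_2d']
print(game_cat.do("move_grid"))  # moves N, S, E or W
```

The programmer's work in this style is the single `without` call; the cost is that someone has to have written the full `Cat` first.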

The idea here is to start with a large thing which can do anything and everything and cut it down until it meets what you need, rather than what we normally do, which is to start with nothing and API’s and build it up until we hit what we need.

If you take this idea further, you could consider an object which can do anything and you take this super powerful object and cut it down to what you want. Start with anything to get to something rather than starting with next to nothing as we do and building up to something.

Here’s an analogy: people are like objects which can become anything. A person can pretend to be a cat, a person can pretend to be a car, a person can become anything. A person doesn’t start with nothing and add behaviors; they start with a large knowledge of objects and make their behaviors specific to the context. Actors do that (I mean actors in movies and plays); people do this for work. We are like super objects which can cut down our behaviors to a smaller set.

FWIW, you’re describing the process of songwriting. Pat Pattison (Berklee) points to the bus scene in 8 Mile. Eminem’s worksheet can be seen for a moment. It is loaded with lines / phrases / ideas. You use various brainstorming tricks to build up a large inventory of related material, then prune to reveal the final song. (Sculpting has been described that way, too: removing the unnecessary bits of stone.)

Or, is Bassett’s frame technology what you have in mind?

http://pdf.aminer.org/000/214/693/buffer_schemes_for_runtime_reconfiguration_of_function_variants_in_communication.pdf

There’s some similarity, but what I’m suggesting is that all code has already been written; programmers should only need to cut it down to what they want. Billions of lines of code have already been written for everything which could exist, so we just cut it down to what we want. That’s in line with the first idea: assume that we have an infinitely powerful computer.

sorry replied in the wrong place

Many ‘young’ people start (and end?) like that. I know quite a few ‘programmers’ aged 16-25 who do not really understand what they are doing (by which I mean they don’t know what arrays are, don’t really understand for/while loops, have a very basic understanding of variables, and have no idea at all about recursion, generics, etc.), but they just use a google / copy-paste / chat loop to write real-world corporate and consumer software. I know at least one programmer in his sixties who has been doing this (starting with BBSs) for almost 30 years; his code looks abysmal, but it works, and he has been making a lot of money selling it for most of that time.

You would think that if you had some kind of Watson tuned for this purpose (with software analysis tools built in to make the ‘glue’ automatic), it would be capable of combining these ‘snippets’ automatically into something which satisfies the constraints, without having much of a clue how to actually write software.

Have a look at Go. The spec is merely ~45 pages long. Compare that to the roughly 1,200 to 1,300 pages that make up the C++ spec. I know it isn’t the perfect language. I just feel it’s simpler and better than the C/C++/Java that have dominated my programming past. It’s a relatively new language (it’s from 2009), and what I love about it is that it has dared to throw some stuff away (like classes and templates/generics).

Webpage: golang.org

Specification: golang.org/ref/spec

Tour: tour.golang.org

Haskell’s report is roughly 250 pages. GHC’s user guide is about another 250 pages.

And now, every new library you need to use can be very barely documented, as the concise types tell you almost everything you need to know. Just a few pages of documentation per library. The restrictive semantics of Haskell make APIs simple. And API simplicity is probably more important than language simplicity, because you’re going to be learning a lot more APIs than languages.

So, with around 700 pages you get a powerful language with a whole lot of techniques that make programming safe and powerful, and a small ecosystem. With a few extra pages per library, you get a much larger ecosystem virtually for free.

700 pages? In terms of complexity, that is a really low bar for a programming language. In fact very few languages are more complicated than GHC Haskell.

The language itself is complex, because it defines the type language which lets you specify libraries far more concisely.

When I see a new library, I rarely read any docs. It’s enough to look at the API and type signatures.

So we get more complexity in the language, and much less complexity in the rest of the ecosystem.

Out of curiosity, how long did it take you to get there?

I’m in my third year of kicking at Haskell for various personal projects, and the lack of docs beyond type signatures is still pretty painful for me.

Simplicity is important, but it isn’t the only thing that’s important.

For example, correctness, reliability and performance are important too.

The lambda calculus is enough to write all computable functions, and is extremely simple, but that doesn’t make it a good environment to actually program in.
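To make the point concrete, here is a small sketch of arithmetic done with nothing but single-argument functions (Church numerals), written with Python lambdas. The names `zero`, `succ`, `add`, `mul` and the decoding helper `to_int` are my own; `to_int` cheats by using Python integers purely so the result can be printed.

```python
# Church numerals: the number n is "apply f to x, n times".
zero = lambda f: lambda x: x
succ = lambda n: lambda f: lambda x: f(n(f)(x))
add = lambda m: lambda n: lambda f: lambda x: m(f)(n(f)(x))
mul = lambda m: lambda n: lambda f: m(n(f))


def to_int(n):
    """Decode a Church numeral by counting applications (not pure lambda calculus)."""
    return n(lambda k: k + 1)(0)


two = succ(succ(zero))
three = succ(two)

print(to_int(add(two)(three)))  # 5
print(to_int(mul(two)(three)))  # 6
```

The whole “language” fits in four lines, yet even adding two small numbers is hard to read, which is the trade-off the comment describes.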

Simplicity is an important criterion, but for my own judgement call, I place safety/reliability above simplicity.

I’d like to point out that this same train of thought has already plowed through the heads of editor writers everywhere.

Everyone knows and agrees that Eclipse/Emacs/Word/what-have-you is the most bloated piece of garbage ever written and would be useful with only about 20% of its current feature set. Then they start talking about it and it turns out each has an almost disjoint 20% in mind.

Not that I’m opposed to the idea of simplicity, it’s an excellent goal, but at extremes it assumes that people and tasks are more homogenous and interchangeable than they are. In particular, your book pic simultaneously gives me the impressions “Wow, that’s a lot of books.” and “Wait, he didn’t stack SICP on that?”

A good approach might be shrinking the applied stack. That is, for any given project you’re working on, minimize the number of components (and hence needed documentation) you use. That way you still need to know a lot, but you don’t have to know a lot to maintain that particular project.

The REAL problem with programming is not any particular language (although OOP is a culprit, not a solution, in my opinion) but rather how the problem is specified/documented. If the problem is “specified” in the form of a Control Table, it can then also be executed DIRECTLY by a suitable interpreter that can be re-used multiple times. The interpreter can house the machine-specific architecture and incorporate “cast iron” library sub-routines. A control table can be designed to be 100% portable (or model-specific if necessary). The design of the table should however reflect the problem outcome and incorporate the essential logic, rather than specific APIs or programming paradigms. A well-designed generic Control Table could, in my opinion, replace 99% of current custom programming in arcane languages with questionable syntax and readability. The design of the interpreter becomes the one remaining challenge but is really a one-time affair for the particular configuration. A combination of a well-designed Control Table and a (generic) interpreter becomes an unbeatable template for generations of later solutions.
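As a minimal sketch of the control-table idea, assuming a very simple table layout of my own invention: the problem is written as rows of plain data, and a small generic interpreter walks the rows and calls fixed library routines. The shipping-fee rules, the row format `(condition, operation, argument)` and the names `SUBROUTINES` and `run_table` are all hypothetical.

```python
# Hypothetical "cast iron" library sub-routines owned by the interpreter.
SUBROUTINES = {
    "add": lambda acc, arg: acc + arg,
    "mul": lambda acc, arg: acc * arg,
    "cap": lambda acc, arg: min(acc, arg),
}

# The control table is plain data: (condition, operation, argument) per row.
# This one encodes an invented shipping-fee rule.
SHIPPING_TABLE = [
    ("always",  "add", 5.0),    # base fee
    ("heavy",   "add", 10.0),   # surcharge for heavy parcels
    ("express", "mul", 2.0),    # express doubles the fee so far
    ("always",  "cap", 25.0),   # never charge more than 25
]


def run_table(table, flags):
    """Generic interpreter: apply, in order, each row whose condition holds."""
    acc = 0.0
    for condition, op, arg in table:
        if condition == "always" or condition in flags:
            acc = SUBROUTINES[op](acc, arg)
    return acc


print(run_table(SHIPPING_TABLE, set()))                 # 5.0
print(run_table(SHIPPING_TABLE, {"heavy"}))             # 15.0
print(run_table(SHIPPING_TABLE, {"heavy", "express"}))  # 25.0
```

Changing the business rule means editing rows in the table, not the interpreter. Whether this scales to “99% of custom programming” is the commenter’s claim, not something this toy demonstrates.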

I’m surprised no one mentioned Rebol or Red. Their extensive use of DSLs, natural syntax and small size (<1MB) have already contributed a lot to the “throw away shit” movement.

The Rebol/Red community is coming together for a conference in Montreal, Canada — July 12-14. The creators of both languages will be there.

Please come and help to fight against complexity!

Exactly!

For those of you who cannot go to Montréal, there is a chat going on right now on stackoverflow:

http://chat.stackoverflow.com/rooms/291/rebol-and-red

There is also the AltMe tool, traditionally used for chatting within the Rebol and Red community: http://www.altme.com/

Carl Sassenrath, the inventor of Rebol, says these words, right on top of his website (http://www.rebol.com/):

“Fighting software complexity…”

Simply yours,

Pierre

I would also add that this is probably the best reading for getting acquainted with the Rebol family of languages: http://www.rebol.com/rebolsteps.html

Do you have any insights as to why REBOL has remained a small isolated community for so long? Is this due to aspects of the language itself or the culture of its community?

I clicked through the Rebol site through many pages trying to figure out what it was, and couldn’t find any code samples; just lots of enterprise marketecture! Why are they afraid to show off their language if it is so simple?

Hi Sean,

I’ll be the first to admit the Rebol web sites are a mess at the moment. We are working on that and that is one of the topics for the conference mentioned above.

As a very brief history, versions 1 and 2 of Rebol were closed source and development had slowed down a lot. The latest version, Rebol 3, is open source (Apache 2.0) and is being actively developed and enhanced (although it is not quite feature-equivalent to Rebol 2 yet).

Here is a good (though by no means perfect) source of documentation for Rebol 3 with some code examples:

http://www.rebol.com/r3/docs/

My guess is that Rebol has remained small and isolated because of being closed source and being quite a different language. In the many years since its inception the rest of the language community has been changing and nowadays there is a greater acceptance of more interesting and different scripting languages.

For me I picked up Rebol when I was learning EJB (v1) and I finally felt that I could have fun with computers again. EJBs were a true dark age. The expressiveness of Rebol is one of its key benefits. I have never seen a language which so simply allows the creation of very readable internal DSLs. There is a lot to Rebol for such a tiny package (Rebol 3 is still a single executable <1MB in size with no dependencies). You can download the current open source builds at http://rebolsource.net

As mentioned above, come join us and have a chat on the stackoverflow chat room and we can help to answer some of your questions.

All the best,

John

@Sean, http://www.rebol.com/rebolsteps.html is a short showcase that we usually recommend first, and personally I like http://www.rebol.com/oneliners.html too.

the urls look better than the site itself… 😉

Please let us know what you think after reading these.

The inventor of Rebol is Carl Sassenrath from Amiga. Amiga failed mostly because of bad marketing. Most of the Rebol community thinks that’s what was plaguing Rebol too. But since its open-sourcing on 2012-12-12, we have entered a new era 😉

Btw, http://rebol.org/script-index.r is full of scripts. It’s quite an old-school site too, but we’re gonna do something about it at the upcoming conference.

The Rebol corporation—and community surrounding it—tried to implement everything on their own, in Rebol. (The main portal for learning about Rebol was an app that was supposed to substitute for a web browser, downloading live pages that consisted of Rebol. The community chat was done in a Rebol app talking to a Rebol server. The bug database was a program written in Rebol. Even when making a webpage, the webserver was written in Rebol.)

For the most part everyone using Rebol rejected the ugliness of HTML, CSS, and JavaScript…and found a better way. That insularity and preference to fiddle instead of release led to shockingly impressive feats from amazingly factored code. But it also meant being insulated from being found on the web. No one knew or cared how to build decent websites…and everyone was too busy thinking how other methodologies would collapse upon themselves to worry about Rebol’s own Achilles heels (like not having a proper open source license).

Technical things have changed since December’s Apache2 open-sourcing, but the websites haven’t changed. That’s because so far, very few people have been given access to improve them (in rebol.com’s case, no one besides the language creator has access). That needs to be opened up like the source and is something I’m going to be pushing hard for in Montreal: community collaboration on GitHub for rebol.org the way that ruby-lang.org does things!

Thanks for the insightful appraisal. Rebol sounds like a good case study of the difficulties with innovating in software. Simplicity and generality are crucial technically but are secondary issues for adoption. Looking at the success of Rails, being ready out of the box to build production-quality products is more important.

Just to put the size of executable into perspective from a historical point of view. In 1972 I installed a full multitasking interactive transaction processing system on an IBM mainframe model 30 with just 32K of real (i.e. total) memory. Approximately 8K of that was occupied by the entire Operating system – that handled all I/O, including multiple device types. The asynchronous terminal communication code was a massively inefficient B.T.A.M. (that I later re-wrote in a fraction of the code for Shell Chemicals). The transaction processor was C.I.C.S. that I had customized to the basic essentials. This left a shared memory pool sufficiently large to execute complex financial programs (about 4K each) and perform fully addressable screen I/O similar to today’s interactive web pages (but without the fancy graphics). Oh, and upward compatibility? It could still run today on IBM’s latest hardware and software without even a re-compile. The language? – Assembler.

One of the most interesting projects aggressively pursuing true simplification is the work being done by VPRI. See their 2011 status report.

They’re trying to figure out how to rebuild the whole stack, top to bottom, with 1/1000th the amount of code. Some of the work (like Dan Amelang’s NILE) is just astonishing. He’s cut to the very heart of what it means to be a graphics rendering system, and the results are remarkable.

There are two basic approaches to finding these game-changing techniques. Techniques that simplify are found by extraordinary people who “work it out”, like Amelang. Techniques that push the capability boundaries of systems will increasingly be machine-created, exploiting their environments in ways we don’t understand, but delivering results we cannot best.