There was a surprising pattern in the response to my talk during my recent road trip. I think of my work as trying to make programming more human-friendly. Yet the people most concerned with human factors had a common reaction: it just isn’t possible to reinvent programming languages from scratch. I was instead expecting negative reactions from the formal methods and analysis people, but some of them seemed to be quite entertained by my work.

Upon reflection, maybe that divergence shouldn’t be so surprising. Techy types love new gadgets, and I was showing them this weird alien technology. Maybe it will never work, but it’s new and cool. On the other hand, people more attuned to the subtleties of human behavior also tend to be more skeptical about the ability of technology to change the human condition.

An even simpler explanation is that at heart I am still a techy, and we instinctively stick together, and are likewise disdained by those Humanities types. Maybe I haven’t crossed between these worlds as far as I would like to think.

The more humanistic researchers threw the technology lock-in argument at me. One example was standard musical notation, which apparently is quite poorly designed, but which continues to dominate better alternatives through sheer inertia. The other example is the familiar tragedy of QWERTY.

They may be right, but I refuse to give up. I believe that programming is a form of human expression as significant as music or mathematics, and it is important to get it right. I am not a musician myself, but since it takes years of training to become musically proficient, perhaps the effort to learn a new notation is prohibitive. I also don’t hear musicians agonizing over the “score crisis”. Mathematics, on the other hand, has the famous example of the fight between Newton and Leibniz’s notations for Calculus. It is said that Newton’s inferior notation held back English mathematics a century until it was finally abandoned.

QWERTY is certainly a case of lock-in, but it is a hardware standard, and thus constrained by the economics of production. Actually there are quite a few alternative keyboard users at CSAIL, though they seem more concerned with hand pain than typing speed. What stops me from switching is the unavailability of alternative keyboards for laptops. Anyway, typing speed really isn’t a problem for me.

My counterexample to the lock-in argument, which I didn’t think of until I was on the plane home, is COBOL. At one point the vast majority of software in the world was written in COBOL. Now it is hard to find a COBOL programmer under 50, except offshore. Opinions differ on the reason. Maybe it was horribly misbegotten and justly banished, or maybe it got unfairly discarded by a generational shift in fashion. Whatever, COBOL is an existence proof that the dominant programming language can be displaced. All we need to do is invent a new language that is so cool it makes Java seem as archaic as COBOL, and then wait for the next generation of programmers to grow up.

So, in economic terms, the people you meet are using QWERTY as an example of market failure? First, there are economic counter-arguments that suggest QWERTY is not an example of market failure. The crux of the argument on both ends is the fact there’s really only ONE study citing DVORAK’s superiority to QWERTY. Read about it here: http://www.mises.org/story/407

Apparently, musical notation is being used as another example, one I’m less familiar with. I’m not sure how one critiques the design of musical notation, and I’m probably not the best judge, since I stopped reading sheet music in the 8th grade when other electives became available in high school. However, as much as I enjoy reading Edward Tufte’s comments on design and his ability to argue that one visualization format is better than another, I am leery of believing that there will be a visual programming language that results in *market failure*.

As for displacing programming languages, there are two major forces: Government intervention and private sector intervention. In the private sector, the financial and healthcare industries are what determine the programming technologies of tomorrow. Currently, Microsoft is shifting away from “business architecture process languages” and towards “business architecture capability languages” (Motion). The underlying argument being made here is that a process is not the appropriate level of abstraction. In other words, a process is not a general solution that can be freely adapted. A process does not promote data independence. A process is a physical representation of what’s going through a pipe. A process is modeling the corporate mail room with *physically integrated* fiberboard mailing tubes: we’re stuffing mail in those tubes and that’s where mail room agility starts and stops. On the other end, it’s argued that “capabilities” capture merely the loose structure of how capabilities form ad-hoc information networks, and how to make sure these ad-hoc networks *seem* maintainable to the business end-user. After all, complexity is based in perception.

As for the calculus notation: The notation doesn’t matter. What’s important for students to understand is that by merely making a subtle change to the language’s meaning — for more robust composition — we can do multivariable calculus. Going from a real-valued function to a vector-valued real function is painless as far as I’ve experienced.
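To make that concrete, here is a small worked sketch (the symbols are my own choice, not from any particular textbook). For a vector-valued function of one real variable, differentiation is just applied component by component, and the same statement can be written in either Leibniz's or Newton's notation:

```latex
\mathbf{r}(t) = \bigl(x(t),\, y(t),\, z(t)\bigr)

% Leibniz notation
\frac{d\mathbf{r}}{dt} = \left(\frac{dx}{dt},\, \frac{dy}{dt},\, \frac{dz}{dt}\right)

% Newton notation
\dot{\mathbf{r}} = \bigl(\dot{x},\, \dot{y},\, \dot{z}\bigr)
```

Both lines say the same thing; only the spelling differs.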

Perhaps a bigger gripe in mathematics is the representation for Pi. IIRC, a professor at Utah has a PDF on his web page that hits reddit every few weeks about why the value chosen for Pi has turned out to be suboptimal. I actually tend to agree with this, because it does affect *operational semantics*. His solution was to create a Pi symbol but add a “third leg”. My solution is that mathematicians should be forced to draw this: http://www.angrypi.com/AngryPi.png (still awaiting inclusion in Unicode Web Dings). =)

Well, call me a dreamer, but I think that we HAVE TO reinvent programming languages from scratch.

My opinion is based on an observation (aka “reuse” of non-programming paradigms):

All real Engineering paradigms use well-defined diagrams to communicate design information.

The fact that we don’t use diagrams for programming should be a clue as to why software isn’t Engineering…

(Sorry – UML is exactly the wrong thing. I contend that it is entirely possible to use diagrams as “syntax & semantics” for software. If you want to see my attempt at a practical visual language, see the p.s. below).

Music: the “score crisis” does indeed exist and at least one manifestation of it is “The Suzuki Method” of teaching real music to kids before torturing them with musical notation. Another manifestation of the score crisis is the home recording studio. Songwriters can now completely sidestep the notation issue and communicate their ideas audibly. The leader of one of my favourite bands (Ian Anderson of Jethro Tull) has sidestepped the notation issue by using “tapes” for the 40-ish years that the band has been around, whilst producing incredibly complicated music (e.g. 4 or more time signature changes in one 5-minute song).

QWERTY: Wii. Maybe the lock-in argument is only good for small changes? Maybe when a Stephen Jay Gould asteroid hits the planet, we get a punctuated-equilibrium chance to kill off the QWERTYs. Computers are way too complicated and the whole “PC as a generalized computing platform” idea is stupid (for real, non-programmer humans) – so maybe there is a big asteroid headed our way? Of course, a counter-argument to this thought, is the keyboard layout on a Blackberry – sigh.

I am under the impression that universities don’t teach “cool” languages anymore. They teach Java and whatever you need to “get a job” when you graduate. That might explain why interest in COBOL (and all other cool/uncool languages) has faded.

We might get “more interest” in new programming languages if Software Engineers were required, under law, to affix their professional seals / signatures to their works and be liable for them, as is done with real Engineers.

pt

p.s. I very much enjoy the ideas in subtext. We invented a visual language and have been using it to implement commercial applications for a decade or so. I see subtext ideas as a complement to our VF ideas (e.g. as an alternative to the state diagram stuff we use), but always lack the time (/$’s) to explore this avenue further. If you are interested in looking at our ideas, they are loosely gathered at http://www.visualframeworksinc.com.

Sorry for a second post… I just thought of another way to explain why the calculus notation doesn’t really matter. What’s important is the *capabilities* of a particle and its instantaneous rate of change.

Another thought… inspired by Reid McRae Watt’s book, “The Slingshot Syndrome”. I was just having a conversation with a friend about this and it occurred to me that if you want to find the next leap in technology you can often look at what the current market leaders in industry are looking to bury to protect their mature revenue streams. For example, recently, Microsoft buried Visual Fox Pro. There are a lot of businesses who need something to replace Fox Pro, as Fox Pro developers will become scarce. Also, for data-centric applications, many of my older friends who used to be Fox Pro developers tell me that it was just great.

There seem to be lessons to learn from that community, and I’ve been digging around for case studies about the history of Visual Fox Pro, but can’t seem to find any. There is a book that talks candidly about Fox Technologies and the evolution of the company up to being bought out by Microsoft, about what was done wrong and how it could have been better, but nothing about the developer community. The point being that if I want to develop data-centric applications, I should talk to the experienced developers and experts, and that probably means the Fox Pro community.

It’s not at all radical to suggest that people re-invent the wheel AND SO SO POORLY. Just look at Java and EJB 1.0, and persistence problems. The Smalltalk developers on Usenet would tell the Java developers about this thing called “Object-Relational Mapping”, and many Java developers wouldn’t hear it because they were drinking the Kool-Aid from Sun’s marketing team about EJBs, and they considered ORM an untested, radical new-fangled contraption.

Another example in the Java community is the poor architecture of some Swing applications and the misconception Swing gets you MVC for free. However, Swing just allows you to compose applications in an MVC manner — if you’re disciplined enough! There is a framework out there, JStaple, that is part of a larger framework, that basically tries to encapsulate Swing best practices.

“…since it takes years of training to become musically proficient, perhaps the effort to learn a new notation is prohibitive.”

Speaking as a musician, I think the years of training are spent on expressiveness, technique, and control. It takes maybe a couple years to master the notation, but you’re also learning the basics of western music along the way (12-note scale, keys, flats and sharps, etc). I’d guess that learning a new notation might seem harder than it really is. As a programmer, I’m comfortable with Java/C#, Ruby, Lisp, SQL, and XML.

Speaking as a Dvorak typist, the main cost of switching from QWERTY to Dvorak is re-training muscle memory; I imagine that would be a bigger cause of QWERTY lock-in. There -is- the cost of OS/hardware integration, but that’s made trivial on Windows platforms by the Dvorak Assistant (http://sourceforge.net/projects/dvassist). I highly recommend using it over Windows’ built-in language switching features. I have only a bit of experience with Ubuntu Linux, but I had no problem switching it to Dvorak.

FWIW, I posted about my experience switching to Dvorak, and my thoughts on QWERTY lock-in: “Dvorak: the Betamax keyboard” http://invisibleblocks.wordpress.com/2006/12/31/dvorak-the-betamax-keyboard/

Z-Bo says “First, there are economic counter-arguments that suggest QWERTY is not an example of market failure.”

Actually, if you look again, the people who deny this are generally claiming that there is no such thing as market failure, ever. This is a much stronger and wider claim. For example, why is IE6 the majority browser, why is .doc the predominant word processing format, take your pick of examples. For them the answer must be: because it is the best option. Anything else means there are market failures and opens the door to government intervention.

People without such strong theoretical political/economic opinions don’t have this answer predetermined for them. This doesn’t make them right, but it gives them less reason to intentionally distort the arguments out of anything other than standard human laziness.

The link goes to a different analysis than the Reason one that usually gets mentioned (http://www.reason.com/news/show/29944.html) but it’s the same kind of thing. The money quote for me is this:

“Microsoft may have wanted to stifle innovation in this case; they were simply unable to do so.”

I’m not prepared to accept economic or technical arguments from anyone who claims that Microsoft was unable to stifle innovation during the browser wars because the market was so efficient in curbing this convicted monopoly abuser’s behaviour. (Of course in this case the government intervention was even less effective). That’s an even more absurd case of privileging political theory over reality.

Oh, the rebuttal to the Reason article is here: http://www.mwbrooks.com/dvorak/dissent.html

@The money quote for me is this: “Microsoft may have wanted to stifle innovation in this case; they were simply unable to do so.”

The above quote does not appear in the article you linked, or my curl/grep script is defective. Where does it come from? In addition, a quote is not a substitute for critical thinking about market failure. The author of that quote is making himself or herself an authority. It simplifies debate by making oneself an unquestionable authority. We call that “propaganda” and “yellow journalism”.

As an observation, Microsoft is a free market business, and that means they have competitors.

There are a lot of reasons why IE6 is the majority browser right now, just as there were a lot of reasons why IE4 was hanging around a lot longer than Microsoft expected. I’ll talk about IE4, because history has a tendency of repeating itself, and it’s funny to watch it do so. Back then, in early 2001, The Web Standards Project advocated that webmasters should encourage end-users to upgrade their browsers by detecting older browsers and pushing diagnostic messages to end-users. As you may recall, there were earlier arguments related to this, like Jeffrey Zeldman’s “To Hell With Bad Browsers” (http://www.alistapart.com/stories/tohell/). Substitute “Bad Browsers” with “HMOs” and you would think Zeldman was coming off as righteous and making a political statement. In fact, that’s the correct way to interpret it: as a political statement. The ALA article I linked to and the WaSP’s campaign are lobbyist attempts. (If you are unaware of the technical history of web publishing, then please refer to http://hotdesign.com/seybold/everything.html).

What does this political lobbying capture effectively and what does it ignore? It focuses on standards, because standards are objective and conformance can be appraised. It captures the rationale for the standards, why technological progress is empowering for everyone (implicitly: WHO CAN AFFORD IT), and uses the scientific method to defend political motives. There was a push for a shift from the viewpoint espoused by David Siegel in his 1996 best-selling book, “Creating Killer Web Sites”. Not just a best-selling technical book, but a best-selling book as judged by Amazon. It was so successful that a second edition was printed the next year! Around this time, the only other book about web publishing getting serious consideration was MIT professor Philip Greenspun’s “Philip and Alex’s Guide to Web Publishing” aka the PANDA book.

These two books greatly influenced the front-end design of high traffic websites. They addressed practical problems and presented practical solutions that worked in the market at that time. Keep in mind that CSS-1 had been around since 1996. However, NO browsers adopted CSS-1 until 1999 (http://www.w3.org/TR/REC-CSS1). Sure, Microsoft’s Internet Explorer started the movement at version 3.0, but Netscape was focusing on different approaches: Dynamic HTML. However, what changed things was this notion of a “document object model” that separated content from presentation, and in 1999 we saw the first roll-out of browsers supporting CSS-1, and CSS-1 has been frozen since then. Note that in 1997, David Siegel issued the famous apology, “The Web Is Ruined and I Ruined It!”, in his article, “Part I: The Roots of HTML Terrorism” (http://web.archive.org/web/20010208104424/http://www.webreview.com/1997/04_11/webauthors/part2.shtml). In that article, Siegel explained quite correctly why this wasn’t an instance of market failure at all, and he accurately predicted DOM was going to be a middle ground solution. Any failures leading up to DOM were technical failures and not market failures. A product cannot be a failure in the market if it doesn’t exist. We call that vaporware. Ideas are good, but it is execution that matters.

Looking at the WaSP’s political agenda in 2001 is revealing. The browsers that supported CSS-1 at the time had only become stable in 2000, so it was only a few months that these browsers’ features were considered suitable for wide deployment, and yet the political lobbyists insisted the best decision was to post diagnostic information to the user that their browser did not conform to current standards. At the time, the browsers an end-user could reasonably upgrade to were IE 5.5, IE5 on Mac, Netscape 6, and Opera 5. At the time, Netscape 6 was not a suitable option because it was merely a dump of the work-in-process codebase of Netscape/AOL, with attention already shifting towards Mozilla 1.0. Due to development failure, Netscape 6 was packaged as abandonware, prima facie. Thus, Windows end-users being told to upgrade had to choose to upgrade from IE4 to IE 5.5. The fly in the ointment to this idea is twofold:

(1) IE5.5 was significantly bloated and slower than IE4. All of these technological innovations that made it simpler for businesses to create dynamic web sites did not provide time for profiling and optimization of the rendering engine. In addition, it would later surface that there was miscommunication between W3C Standards and the IE development team regarding CSS positioning, which was only explained by the IE team when IE7 was in development and which no one at the time understood the reasons for. PROBLEM: People on slow computers couldn’t be expected to upgrade to IE5.5. In addition, many websites were still written to support Netscape 4 and IE4. Response to technological change is slow, and as with government, changes in policy face an implementation lag. When the federal government passes a bill that adjusts tax rates, redistributing income, it takes a while for the effects to be felt in consumers’ wallets. When the government of the web, W3C, passes a Standard that adjusts the way web browsers are supposed to behave and designers’ expectations are supposed to change, there is going to be an implementation lag. This is not market failure. It is merely implementation lag, and is well understood and well-studied. A lot of resources indirectly affected by these changes must be re-allocated, and that takes time.

(2) The critical assumption underlying WaSP’s political movement is that end-users can actually affect their situations. However, most end-users are not administrators of the systems they use. They are merely end-users. Targeting the political campaign at end-users is shortsighted. The alternative is that funds should have been raised for more productive political lobbying targeting system administrators. However, that alternative is also crippled by the fact these companies typically depended on IE4 and Netscape 4 browser behaviors, and thus many system administrators had no choice but to keep IE4 and Netscape 4 on these systems. When you have a critical information system, you must protect it. (That’s why there are still some COBOL programmers, and why universities like Indiana have offered to become feeder schools to corporations in desperate need of COBOL programmers to maintain legacy systems, to the point where Indiana University actually has courses that teach COBOL in 2007). Thus, these diagnostic messages in many cases were nothing more than a nuisance to end-users unable to affect their situations.

The other problem with this political campaign is that the idea of hiding these diagnostic messages in CSS-compatible browsers using display:none was in clear violation of accessibility standards.

I hope this history has been instructive.

When the Humanists say things like that to you SAY THIS:

Have you not noticed that as time goes on, technologies, more and more, seek to meet the remaining unfulfilled human needs! That’s why COBOL and any other technology either evolves, is augmented, or is abandoned–we constantly seek to meet the needs that are not fulfilled (of which a vast amount remain within the software engineering industry), at any given point in time. That probably explains also why technological improvement is gradual–it takes the recognition of needs to drive the satisfaction of needs, and most people don’t recognize needs until they meet them in their practice–until those needs are staring them in the face, or cramping them in the stomach–they don’t tend to think that far ahead.

I think COBOL is actually a tame example. 60 years ago people used switches to program. It then went through paper strips to arrive at the powerful punched cards. Punched cards only gave up the ghost 30 years ago — though I’m aware of people still doing it 20 years ago.

So using text to program, as we know it today, is the third incarnation of programming in 60 years. Sure, you had text representations for what you did with switches and punched cards; COBOL and FORTRAN, after all, were “text”, even though you fed cards to the damned computer.

And, really, we gave up on that already. Is there someone who programs for a living who doesn’t have COLORS helping him? One might argue he doesn’t place the colors there, the computer does, but that’s not really true. He KNOWS the software to be correct when the colors are correct, and, in this regard, yes, he MAKES the computer put the correct color, by the artifact of keying in the right letters.

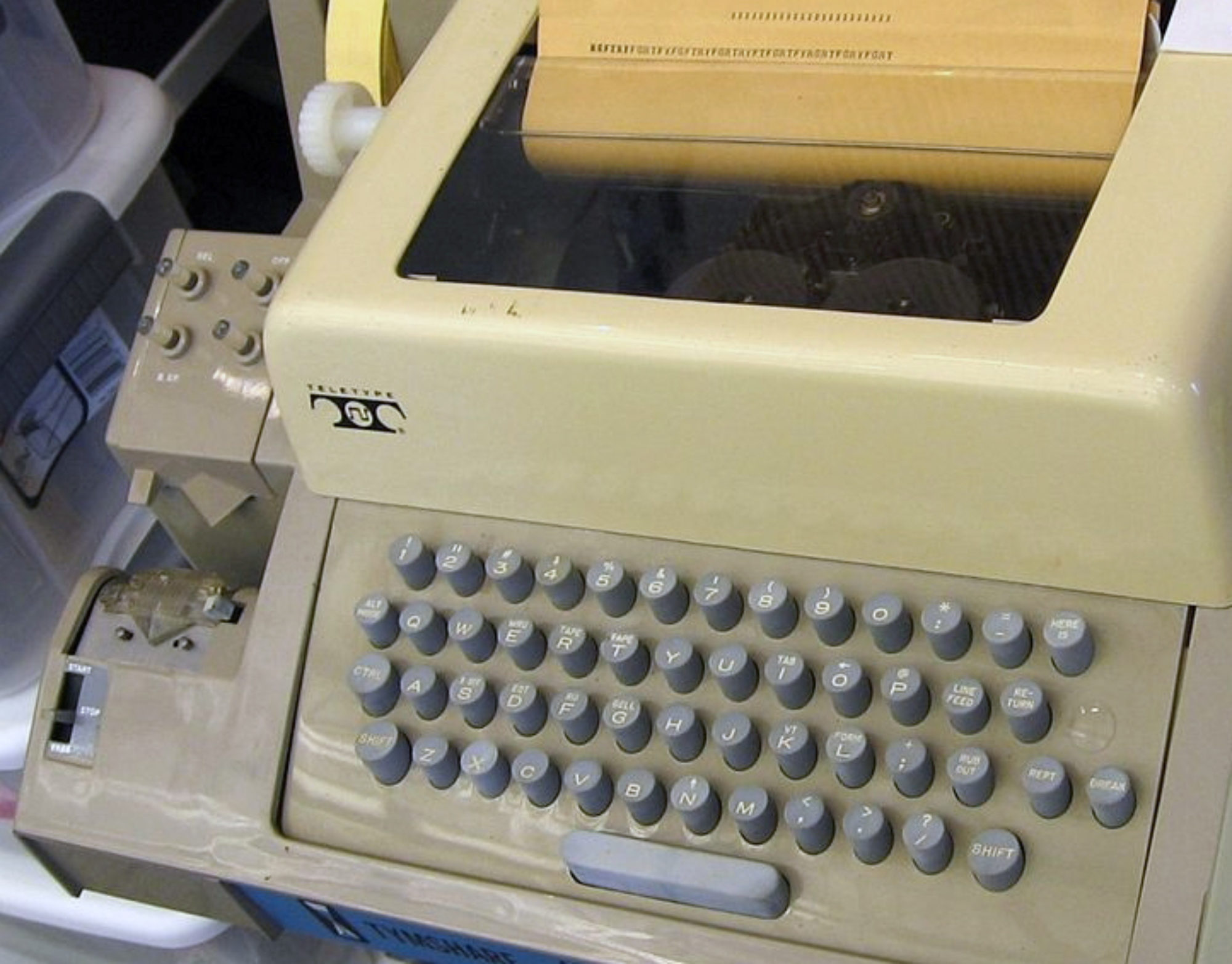

It might not seem a big deal to someone who doesn’t spend 8 hours a day writing a program, but, then, the difference between punched cards and writing text on a teletype is probably beyond them too. And if the subtext tables generate a text output, they won’t think it different either. They’ll just point to the text and say “see, it’s all the same thing”.

Now, I don’t think syntax must die. I think it must be aggregated with anything a computer can help with. We didn’t indent code for nothing. The blank space was there to make the horizontal dimension MEAN something, to the programmer if not necessarily the programming language.

So use tables, by any means. But use syntax too! And colors, and graphs, and whatever other means we make available. I can, for instance, think of using a third dimension to represent time or exceptions or nesting or abstraction.

Use everything available. What we DO know of programming history is that even when the best ideas fail at the market, they eventually show up in one of the winners down the road.

It’s irrelevant whether Dvorak is faster or not; a faster keyboard design is unlikely to displace QWERTY because it simply isn’t going to be that earthshaking of an improvement.

The reason keyboards and musical notation aren’t a good argument against what you’re trying to do is this: once you learn Qwerty or musical notation, you know it, and can do what you need to do just fine, and learning some easier alternate method isn’t going to change much for you. This isn’t the case with programming languages. The flaws of the current paradigm, compared with what we can imagine is possible, aren’t something we overcome with time. Programming in the way we do continues to be error prone and a productivity drag. We can imagine enormous improvements over what we do now. A better comparison would be the old horse or mule drawn plow vs the tractor. The plow had complete market lock-in for centuries, but when the tractor came along it was such an improvement there was no competition.

> QWERTY is certainly a case of lock-in, but it is a hardware standard, and thus constrained by the economics of production.

I use a keyboard with QWERTY labels, but I remapped it in software to use the Colemak layout. The corresponding solution for new programming languages is a good foreign function interface that allows you to call libraries written in other languages *and* allows you to write libraries that can be called from other languages. If you write a library in your language that I use in my language there is a good chance that I will try your language.
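As a rough sketch of what such an interface looks like in practice, here is Python's standard `ctypes` module standing in for a new language's FFI. This is my choice of illustration, not something the original comment names. It assumes a POSIX system where the C library can be loaded, and the helper names `c_strlen` and `c_sort` are hypothetical. It shows both directions: calling a function from a C library, and handing a function written in the host language to C code as a callback.

```python
import ctypes
import ctypes.util

# Assumption: a POSIX system. If find_library returns None, CDLL(None)
# still exposes the C library's symbols on such systems.
libc = ctypes.CDLL(ctypes.util.find_library("c"))

# Direction 1: call a library written in another language (C's strlen).
libc.strlen.argtypes = [ctypes.c_char_p]
libc.strlen.restype = ctypes.c_size_t


def c_strlen(text: bytes) -> int:
    """Return the length of a byte string as computed by C's strlen."""
    return libc.strlen(text)


# Direction 2: let foreign code call a function written in this language.
# C's qsort calls back into the Python comparison function below.
CompareFn = ctypes.CFUNCTYPE(
    ctypes.c_int, ctypes.POINTER(ctypes.c_int), ctypes.POINTER(ctypes.c_int)
)
libc.qsort.argtypes = [
    ctypes.c_void_p,   # base pointer of the array
    ctypes.c_size_t,   # number of elements
    ctypes.c_size_t,   # size of one element in bytes
    CompareFn,         # comparison callback
]
libc.qsort.restype = None


def _compare_ints(a, b):
    """Three-way comparison of two pointed-to C ints."""
    return (a[0] > b[0]) - (a[0] < b[0])


def c_sort(values):
    """Sort a list of ints using C's qsort with a Python comparator."""
    array = (ctypes.c_int * len(values))(*values)
    libc.qsort(array, len(values), ctypes.sizeof(ctypes.c_int),
               CompareFn(_compare_ints))
    return list(array)
```

Calling `c_strlen(b"subtext")` or `c_sort([5, 1, 7, 33, 2])` goes through the C library each time. The declarations of argument and return types are the glue a good FFI tries to keep small.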

The only criteria for a new technology are: easy to use + way better. I’m still shaking my head at the success of GIT (and clones). Would have thought that source code control systems were a solved problem. Apparently not, although the ease of GIT adoption is key to its success.

Other examples are the Wankel rotary engine (never succeeded), and Postscript (mathematically elegant replacement for typography markup code). Postscript only half succeeded; mainly it caused HP to improve its printer markup language PCL to be good enough.